What are Embeddings?

Embeddings are dense vector representations that map text, images, or code into high-dimensional space where semantic similarity corresponds to geometric proximity.

Modern AI and search systems work with meaning, not just words. When a user searches for "cancel my subscription," the system should also find documents about "account termination" -- even though the two phrases share no words. Embeddings make this possible by converting text (or images, code, or any data) into numerical vectors where similar meanings are close together in vector space.

An embedding is a list of numbers -- typically 384 to 3072 floating-point values -- that represents the semantic content of a piece of text. These vectors are produced by embedding models trained on large text corpora, where the training objective encourages semantically similar inputs to produce vectors that are geometrically close (as measured by cosine similarity or dot product) in high-dimensional space.
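As a rough illustration of the two measures named above, the sketch below computes dot product and cosine similarity in plain Python. The 4-dimensional vectors and their variable names are made up for the example; a real model would produce hundreds or thousands of dimensions.

```python
import math

def dot(a, b):
    """Dot product of two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    return sum(x * y for x, y in zip(a, b))

def cosine_similarity(a, b):
    """Cosine of the angle between a and b (1.0 means same direction)."""
    norm_a = math.sqrt(dot(a, a))
    norm_b = math.sqrt(dot(b, b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot(a, b) / (norm_a * norm_b)

# Hypothetical toy vectors, not output from any real embedding model.
cancel_subscription = [0.9, 0.1, 0.3, 0.0]
account_termination = [0.8, 0.2, 0.4, 0.1]
photosynthesis = [0.0, 0.9, 0.0, 0.8]

print(cosine_similarity(cancel_subscription, account_termination))  # high
print(cosine_similarity(cancel_subscription, photosynthesis))       # low
```

When vectors are normalized to unit length, cosine similarity and dot product give the same ranking, which is why many systems store normalized embeddings and use the cheaper dot product.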

What Embeddings Represent

Each dimension in an embedding vector captures some aspect of meaning, but unlike hand-crafted features, these dimensions are learned automatically and don't correspond to human-interpretable concepts. The key property is that the geometric relationships between vectors reflect semantic relationships between inputs:

- "king" - "man" + "woman" produces a vector close to "queen"

- "Python" and "JavaScript" are closer together than "Python" and "photosynthesis"

- "How do I reset my password?" and "I forgot my login credentials" produce similar vectors

This property emerges from training. Embedding models learn to compress the statistical patterns of language into dense vectors such that inputs appearing in similar contexts (and thus having similar meanings) end up near each other in vector space.
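The vector arithmetic in the first bullet can be sketched with hand-made toy vectors. Everything here is an assumption for illustration: the three-dimensional vectors are invented so the arithmetic is easy to follow, whereas learned dimensions are not interpretable like this and real analogies hold only approximately.

```python
import math

def cosine(a, b):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

# Hand-made toy vectors, NOT produced by a trained model.
vocab = {
    "king":  [0.9, 0.9, 0.1],
    "queen": [0.9, 0.1, 0.9],
    "man":   [0.1, 0.9, 0.1],
    "woman": [0.1, 0.1, 0.9],
    "apple": [0.0, 0.2, 0.2],
}

def analogy(a, b, c):
    """Return the word closest to vec(a) - vec(b) + vec(c), excluding the inputs."""
    target = [x - y + z for x, y, z in zip(vocab[a], vocab[b], vocab[c])]
    candidates = [w for w in vocab if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(target, vocab[w]))

print(analogy("king", "man", "woman"))
```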

How Embedding Models Work

Transformer Encoders

Most modern embedding models are based on encoder-only transformers derived from the BERT architecture. The process works as follows:

- Tokenization: Input text is split into subword tokens using a vocabulary (e.g., WordPiece, BPE). "Kubernetes deployment" might become ["kubernetes", "deploy", "##ment"].

- Token encoding: Each token is mapped to a learned vector, and positional encodings are added.

- Self-attention layers: Multiple transformer layers process the token vectors, with each layer's self-attention mechanism allowing every token to attend to every other token. This builds contextual representations -- the vector for "bank" differs depending on whether the surrounding context is about finance or rivers.

- Pooling: The final token representations are combined into a single vector representing the entire input. Common pooling strategies include CLS token pooling (using the special classification token's output), mean pooling (averaging all token vectors), and max pooling.
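The pooling step can be sketched in a few lines. This is a simplified illustration that assumes the token vectors are already computed and that the first position holds the classification token; the numbers are made up, and a production implementation would also mask out padding tokens before averaging.

```python
def cls_pool(token_vectors):
    """Use the vector at position 0 (assumed to be the [CLS] token)."""
    return list(token_vectors[0])

def mean_pool(token_vectors):
    """Average each dimension across all token vectors."""
    n = len(token_vectors)
    return [sum(column) / n for column in zip(*token_vectors)]

def max_pool(token_vectors):
    """Take the per-dimension maximum across all token vectors."""
    return [max(column) for column in zip(*token_vectors)]

# Hypothetical final-layer outputs for a 3-token input, 3 dimensions each.
token_vectors = [
    [0.2, 0.4, -0.1],  # position 0: [CLS]
    [0.6, 0.0, 0.3],
    [0.1, 0.8, 0.1],
]

print(cls_pool(token_vectors))
print(mean_pool(token_vectors))
print(max_pool(token_vectors))
```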

Sentence Transformers

Raw BERT embeddings are not optimized for semantic similarity -- sentences like "A dog sits on a bench" and "A dog is sitting outside" might produce dissimilar vectors despite having similar meanings. Sentence transformer models (like those from the sentence-transformers library) fine-tune BERT-style encoders using contrastive learning objectives.

During training, the model is shown pairs of similar and dissimilar sentences. It learns to produce embeddings where similar pairs have high cosine similarity and dissimilar pairs have low cosine similarity. This training process -- often using triplet loss or contrastive loss -- transforms a general-purpose language model into one that produces semantically meaningful embeddings.
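One common formulation of triplet loss is max(0, d(anchor, positive) - d(anchor, negative) + margin). The sketch below uses cosine distance for d; the margin of 0.5 and the example vectors are arbitrary choices for illustration, not values from any particular model or library.

```python
import math

def cosine_distance(a, b):
    """1 - cosine similarity; 0.0 for identical directions."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)

def triplet_loss(anchor, positive, negative, margin=0.5):
    """Zero once the positive is closer to the anchor than the negative by at least `margin`."""
    return max(0.0, cosine_distance(anchor, positive)
                    - cosine_distance(anchor, negative)
                    + margin)

anchor = [1.0, 0.0]
similar = [0.9, 0.1]     # should end up near the anchor
dissimilar = [0.0, 1.0]  # should end up far from the anchor

print(triplet_loss(anchor, similar, dissimilar))  # well-separated triplet
print(triplet_loss(anchor, dissimilar, similar))  # violated triplet, positive loss
```

During training, the gradient of this loss with respect to the model's weights moves the positive closer to the anchor and the negative farther away.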

Embedding Dimensions

Embedding models produce vectors of a fixed size, called the embedding dimension. Common dimensions include:

| Model | Dimensions | Notes |
|---|---|---|
| OpenAI text-embedding-3-small | 1536 | General purpose |
| OpenAI text-embedding-3-large | 3072 | Higher quality |
| Cohere embed-v3 | 1024 | Multilingual |
| BGE-large-en-v1.5 | 1024 | Open-source |
| E5-large-v2 | 1024 | Open-source |
| all-MiniLM-L6-v2 | 384 | Lightweight |

Higher dimensions generally capture more nuanced meaning but require more storage and compute for similarity calculations. A 768-dimensional embedding for a single text chunk uses 3,072 bytes (768 x 4 bytes per float32). At scale -- millions of documents with multiple chunks each -- embedding storage becomes a significant consideration.
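The storage arithmetic above generalizes easily. The helper below is a back-of-the-envelope sketch; the corpus size (one million documents, five chunks each) is a hypothetical figure, and the estimate covers raw vectors only, not index structures or metadata.

```python
def embedding_storage_bytes(num_vectors, dimensions, bytes_per_value=4):
    """Raw storage for dense vectors (default 4 bytes per float32 value)."""
    return num_vectors * dimensions * bytes_per_value

# One 768-dimensional float32 vector, as in the text.
print(embedding_storage_bytes(1, 768))  # 3072

# Hypothetical corpus: 1,000,000 documents x 5 chunks each.
total = embedding_storage_bytes(1_000_000 * 5, 768)
print(total / 1e9, "GB")  # 15.36 GB
```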

How Embeddings Enable AI Applications

Semantic Search

The most direct application of embeddings is vector search. Documents are embedded at index time, queries are embedded at search time, and the nearest vectors in the index are returned as results. This captures meaning rather than keywords -- "cancel subscription" matches "account termination" because their embedding vectors are close together.
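A minimal sketch of the search step follows, assuming the document and query vectors have already been produced by some embedding model. The vectors and document IDs are invented, and the exhaustive scan shown here stands in for the approximate nearest-neighbor index that a production system would normally use.

```python
import math

def cosine(a, b):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def top_k(query_vec, index, k=3):
    """Return the k (doc_id, score) pairs most similar to the query, best first."""
    scored = [(cosine(query_vec, vec), doc_id) for doc_id, vec in index.items()]
    scored.sort(reverse=True)
    return [(doc_id, score) for score, doc_id in scored[:k]]

# Hypothetical index built at index time: doc_id -> embedding.
index = {
    "account-termination": [0.8, 0.2, 0.1],
    "billing-faq": [0.5, 0.5, 0.2],
    "photosynthesis": [0.0, 0.1, 0.9],
}

# Hypothetical embedding of the query "cancel subscription".
query_vec = [0.9, 0.1, 0.0]

for doc_id, score in top_k(query_vec, index, k=2):
    print(doc_id, round(score, 3))
```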

Retrieval-Augmented Generation (RAG)

RAG systems use embeddings to retrieve relevant context before generating an answer. The query is embedded, similar document chunks are retrieved via vector search, and the retrieved text is passed to a language model as context. Embedding quality directly determines retrieval quality, which in turn determines answer quality.

Clustering and Classification

Embeddings enable unsupervised clustering (grouping similar documents without labeled data) and few-shot classification (categorizing documents with only a handful of examples per category). Because embeddings capture meaning, a simple k-nearest-neighbors classifier over embedding space can achieve strong results without task-specific model training.
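The k-nearest-neighbors idea can be sketched as a majority vote over the most similar labeled examples. The two-dimensional vectors and the "billing" and "technical" labels below are hypothetical stand-ins for real embeddings and categories.

```python
import math
from collections import Counter

def cosine(a, b):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def knn_classify(query_vec, labeled, k=3):
    """Majority label among the k labeled examples most similar to the query."""
    nearest = sorted(labeled, key=lambda item: cosine(query_vec, item[0]), reverse=True)[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]

# A handful of hypothetical labeled examples: (embedding, label).
labeled = [
    ([0.9, 0.1], "billing"),
    ([0.8, 0.2], "billing"),
    ([0.1, 0.9], "technical"),
    ([0.2, 0.8], "technical"),
    ([0.15, 0.85], "technical"),
]

print(knn_classify([0.85, 0.2], labeled))
print(knn_classify([0.1, 0.95], labeled))
```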

Embeddings vs. Keyword Matching

The fundamental difference is that keyword matching operates on surface-level token overlap, while embeddings operate on learned semantic representations:

| Aspect | Keyword Matching (BM25) | Embeddings |
|---|---|---|
| Matching basis | Exact token overlap | Semantic similarity |
| "cancel subscription" vs. "account termination" | No match | High similarity |
| Exact identifiers (error codes, IDs) | Strong match | Weak match |
| Computational cost | Low (inverted index lookup) | Higher (vector computation) |
| Storage | Inverted index | Dense vectors (KB per document) |
| Interpretability | High (which terms matched) | Low (opaque vector space) |

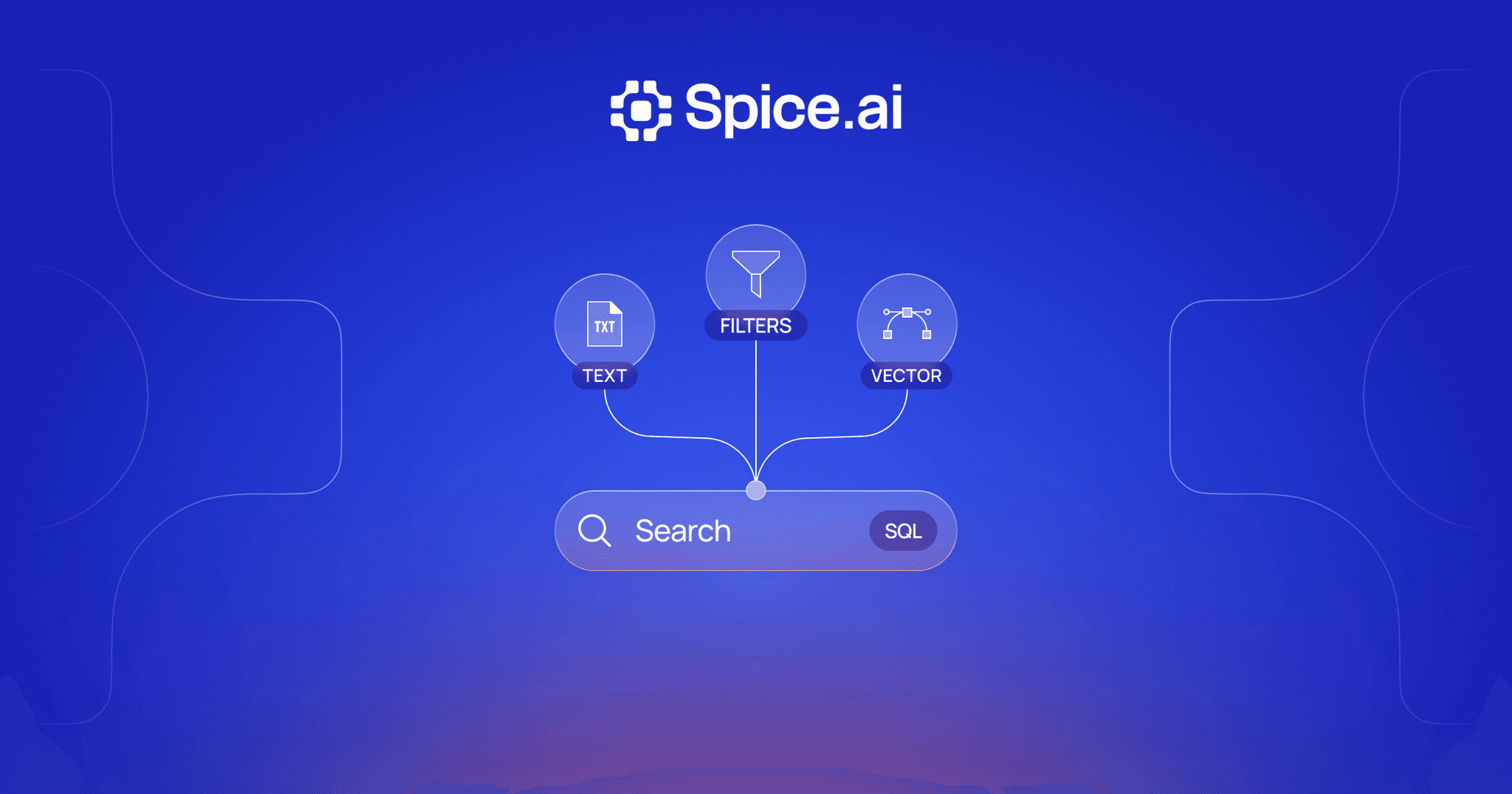

In practice, combining both approaches through hybrid search delivers better results than either alone. BM25 full-text search handles exact terms and identifiers, while embeddings capture meaning and handle vocabulary mismatch.
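One widely used way to merge a keyword ranking with a vector ranking is reciprocal rank fusion (RRF). The text does not say which fusion method any particular system uses, so treat this as one possible approach; the document IDs are hypothetical, and k=60 is the constant commonly used with RRF.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse ranked lists of doc IDs: each list adds 1 / (k + rank) to a doc's score."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)

# Hypothetical results for the same query from two retrievers.
bm25_ranking = ["error-code-E1234", "reset-credentials", "billing-faq"]
vector_ranking = ["reset-credentials", "rotate-api-keys", "error-code-E1234"]

print(reciprocal_rank_fusion([bm25_ranking, vector_ranking]))
```

Because RRF uses only rank positions, it avoids having to put BM25 scores and cosine similarities on a common scale.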

Choosing Embedding Models

Selecting an embedding model involves tradeoffs between quality, cost, latency, and operational complexity:

Commercial APIs (OpenAI, Cohere, Google) offer high-quality embeddings with simple API calls. The tradeoffs are per-token cost, network latency, vendor dependency, and sending data to external services. OpenAI's text-embedding-3 models and Cohere's embed-v3 are strong general-purpose choices.

Open-source models (BGE, E5, GTE, all-MiniLM) can run locally, eliminating per-token API fees and network round-trips, though you take on the cost of hosting and operating the model yourself. The MTEB (Massive Text Embedding Benchmark) leaderboard is the standard reference for comparing model quality across tasks. Open-source models have closed much of the quality gap with commercial options, especially for English-language tasks.

Key factors to evaluate:

- Quality on your domain: General benchmarks don't always predict performance on domain-specific data. Test with your actual queries and documents.

- Latency requirements: Smaller models such as all-MiniLM-L6-v2 (384 dimensions) generally embed text faster than larger ones, and shorter vectors are cheaper to compare, which matters for real-time search.

- Multilingual support: If your data spans languages, choose a model trained on multilingual data (e.g., Cohere embed-v3, multilingual-e5-large).

- Max token length: Models have a maximum input length (512 tokens for many BERT-derived models; some newer models accept several thousand). Longer documents must be chunked before embedding.

Embeddings with Spice

Spice integrates embedding generation alongside SQL queries and hybrid search in a single runtime. Rather than managing separate embedding services and vector databases, Spice handles the entire pipeline -- generating embeddings, storing vectors, and executing similarity search -- within the same system that handles your SQL queries.

This means you can:

- Generate embeddings using configured models alongside your data queries, without external API orchestration

- Store and index embeddings alongside your relational data from 30+ connected sources

- Combine embedding-based vector search with BM25 full-text search in hybrid search queries

- Keep embeddings fresh as source data changes through real-time CDC

```sql
-- Generate embeddings and search in Spice
SELECT * FROM search(
  'knowledge_base',
  'how to reset API credentials',
  mode => 'hybrid',
  limit => 10
)
```

The unified approach eliminates the typical architecture where embeddings are generated by one service, stored in a vector database, and queried separately from your relational data. Instead, embedding generation, storage, and search are co-located with your SQL federation layer.

Advanced Topics

Fine-Tuning Embeddings

General-purpose embedding models may not perform optimally on domain-specific data. Fine-tuning adapts a pre-trained embedding model to your domain using labeled pairs (similar and dissimilar examples from your data).

The most effective approach is contrastive fine-tuning: given anchor-positive-negative triplets, the model learns to pull positive pairs closer and push negative pairs apart in embedding space. Even small fine-tuning datasets (a few thousand pairs) can significantly improve retrieval quality on domain-specific queries. Libraries like sentence-transformers provide straightforward fine-tuning APIs.

The risk is overfitting -- a model fine-tuned too aggressively on a narrow domain may lose its general-purpose capabilities. Techniques like multi-task training (mixing domain-specific and general pairs) mitigate this.

Matryoshka Representations

Standard embeddings use a fixed dimension, but not all tasks require the full vector. Matryoshka Representation Learning (MRL) trains embedding models such that any prefix of the full embedding is itself a valid, lower-dimensional embedding.

For example, a 768-dimensional Matryoshka embedding can be truncated to 256 or 128 dimensions with graceful quality degradation. This enables adaptive precision -- use the full embedding for high-quality search and a truncated version for fast approximate filtering. OpenAI's text-embedding-3 models support this: you can request a 256-dimensional embedding from a model that natively produces 3072 dimensions.
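Truncating a Matryoshka embedding amounts to keeping a prefix and re-normalizing it. The sketch below assumes the input came from a model trained with MRL; applying it to an ordinary embedding would usually degrade quality much more sharply. The function name and sample values are illustrative only.

```python
import math

def truncate_embedding(vec, dims):
    """Keep the first `dims` values and rescale the result to unit length."""
    if not 0 < dims <= len(vec):
        raise ValueError("dims must be between 1 and len(vec)")
    prefix = vec[:dims]
    norm = math.sqrt(sum(x * x for x in prefix))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in prefix]

# Hypothetical 4-dimensional embedding truncated to 2 dimensions.
full = [3.0, 4.0, 12.0, 0.5]
short = truncate_embedding(full, 2)
print(short)
```

Re-normalizing matters when similarity is computed with a dot product, since a truncated prefix of a unit-length vector is no longer unit length.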

Quantized Embeddings

Storing millions of float32 embedding vectors requires substantial memory. Quantization reduces storage and compute costs by representing each dimension with fewer bits:

- float32 (default): 4 bytes per dimension, full precision

- float16: 2 bytes per dimension, minimal quality loss

- int8: 1 byte per dimension, noticeable but often acceptable quality loss

- binary: 1 bit per dimension, significant quality loss but 32x compression

Binary quantization is particularly effective as a first-pass filter -- use binary similarity to quickly identify candidates, then re-rank using full-precision embeddings.
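The two-stage pattern described above can be sketched as follows: keep one sign bit per dimension, shortlist by Hamming distance, then re-rank the shortlist with full-precision cosine similarity. The sign-based thresholding, the function names, and the sample vectors are assumptions made for this illustration.

```python
import math

def cosine(a, b):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def binarize(vec):
    """Pack one bit per dimension into an int: bit i is set when vec[i] > 0."""
    bits = 0
    for i, x in enumerate(vec):
        if x > 0:
            bits |= 1 << i
    return bits

def hamming(a, b):
    """Number of differing bits between two binary codes."""
    return bin(a ^ b).count("1")

def two_stage_search(query, vectors, codes, shortlist=2, k=1):
    """Stage 1: cheap Hamming filter on binary codes. Stage 2: exact cosine re-rank."""
    q_code = binarize(query)
    candidates = sorted(codes, key=lambda doc_id: hamming(q_code, codes[doc_id]))[:shortlist]
    return sorted(candidates, key=lambda doc_id: cosine(query, vectors[doc_id]), reverse=True)[:k]

# Hypothetical full-precision vectors and their precomputed binary codes.
vectors = {
    "a": [0.9, -0.2, 0.4, -0.7],
    "b": [0.8, -0.1, 0.5, -0.6],
    "c": [-0.5, 0.3, -0.9, 0.2],
    "d": [0.7, 0.6, 0.1, -0.3],
}
codes = {doc_id: binarize(vec) for doc_id, vec in vectors.items()}

query = [0.9, -0.25, 0.35, -0.7]
print(two_stage_search(query, vectors, codes))
```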

Chunking Strategies for Long Documents

Embedding models have a maximum input length (512 tokens for many BERT-derived models; some newer models accept several thousand), so longer documents must be split into chunks before embedding. Chunking strategy significantly affects retrieval quality:

- Fixed-size chunks (e.g., 256 tokens with 50-token overlap): Simple and predictable, but may split sentences or paragraphs mid-thought.

- Semantic chunking: Split at paragraph or section boundaries to preserve coherent units of meaning. More complex to implement but produces higher-quality chunks.

- Recursive chunking: Start with large chunks and recursively split oversized chunks at the most natural boundary (paragraphs, then sentences, then words).

The overlap between adjacent chunks helps ensure that information spanning a chunk boundary appears intact in at least one chunk. Typical overlap is 10-20% of the chunk size.
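Fixed-size chunking with overlap can be sketched as a sliding window. The example assumes the document has already been tokenized; the placeholder strings below stand in for real tokens, and a real pipeline would count tokens with the embedding model's own tokenizer.

```python
def chunk_tokens(tokens, chunk_size=256, overlap=50):
    """Split a token list into windows of `chunk_size` that share `overlap` tokens."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + chunk_size])
        if start + chunk_size >= len(tokens):
            break  # the last window already reaches the end of the document
    return chunks

# Hypothetical 600-token document.
tokens = [f"tok{i}" for i in range(600)]
chunks = chunk_tokens(tokens, chunk_size=256, overlap=50)
print([len(chunk) for chunk in chunks])
```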

Embeddings FAQ

What is the difference between an embedding and a vector?

A vector is a mathematical object -- a list of numbers. An embedding is a specific kind of vector produced by a trained model to represent the semantic meaning of an input. All embeddings are vectors, but not all vectors are embeddings. The term "embedding" implies that the vector was learned to capture meaning in a way that preserves semantic relationships.

How many dimensions should my embeddings have?

It depends on your quality and performance requirements. Models with 384 dimensions (like all-MiniLM-L6-v2) are fast and memory-efficient, suitable for lightweight applications. Models with 768-1024 dimensions (like BGE-large or E5-large) offer a good balance of quality and efficiency. Models with 1536-3072 dimensions (like OpenAI text-embedding-3) can capture finer distinctions but require more storage and compute. Start with a mid-range model and evaluate whether higher dimensions improve results on your specific data.

Do I need to re-embed all my documents if I change embedding models?

Yes. Embeddings from different models exist in different vector spaces and are not comparable. If you switch from one embedding model to another, all documents must be re-embedded with the new model. This is one reason why embedding model selection is an important upfront decision -- migrating between models at scale can be time-consuming and costly.

How do embeddings handle multiple languages?

Multilingual embedding models (like Cohere embed-v3 or multilingual-e5-large) are trained on text from many languages simultaneously. These models map semantically similar content to nearby vectors regardless of language, so a query in English can match a document in French if the content is semantically similar. The quality of cross-lingual matching varies by language pair and model.

What is the relationship between embeddings and RAG?

Embeddings are the foundation of the retrieval step in retrieval-augmented generation (RAG). Documents are embedded and stored in a vector index. When a user asks a question, the query is embedded and the most similar document chunks are retrieved. These chunks are then passed as context to a language model, which generates an answer grounded in the retrieved information. Better embeddings lead to better retrieval, which leads to more accurate and relevant generated answers.

