What is BM25 Full-Text Search?

BM25 (Best Match 25) is the standard ranking function used by full-text search engines to score and rank documents by relevance to a query.

Full-text search is the foundation of information retrieval. When a user types a query, the search system must quickly find the most relevant documents among potentially millions of candidates and rank them by relevance. BM25 is the default ranking function for this step in many widely used search engines, including Apache Lucene and the systems built on it, such as Elasticsearch and Apache Solr. PostgreSQL's built-in full-text search is a notable exception: its ts_rank functions do not implement BM25.

BM25 works by scoring each document based on three factors: how often query terms appear in the document (term frequency), how rare those terms are across the entire corpus (inverse document frequency), and how long the document is relative to the average (document length normalization). These three signals combine to produce a relevance score that is remarkably effective across a wide range of search tasks.

How BM25 Scores Documents

Term Frequency (TF)

The simplest relevance signal is how many times a query term appears in a document. A document mentioning "kubernetes" ten times is probably more relevant to a query about Kubernetes than one mentioning it once.

But raw term frequency has a problem: the tenth occurrence of a term adds less relevance than the first. BM25 addresses this with a saturation function -- term frequency contributes to the score with diminishing returns, controlled by the parameter k1 (typically set to 1.2). Higher k1 values allow term frequency to keep contributing longer before saturating.
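
To make the saturation effect concrete, here is a minimal Python sketch of the term-frequency part of BM25 on its own. It assumes a document of exactly average length, so the length normalization factor drops out; the function name `tf_saturation` is illustrative and not taken from any library.

```python
def tf_saturation(tf: float, k1: float = 1.2) -> float:
    """Term-frequency component of BM25 for a document of average length.

    The value grows with tf but can never exceed k1 + 1.
    """
    return tf * (k1 + 1) / (tf + k1)


# Each extra occurrence adds less than the one before it.
for tf in (1, 2, 5, 10, 100):
    print(f"tf={tf:>3}  k1=1.2: {tf_saturation(tf):.3f}  k1=2.0: {tf_saturation(tf, k1=2.0):.3f}")
```

With k1 = 1.2 the component is capped at 2.2, so going from 10 to 100 occurrences changes the score only slightly. A larger k1 raises the cap and delays saturation.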

Inverse Document Frequency (IDF)

Not all terms are equally informative. A search for "kubernetes deployment error" should weight "kubernetes" and "deployment" more heavily than "error," because "error" appears in many more documents and is less discriminating.

IDF measures term rarity across the corpus. Terms that appear in few documents get high IDF scores; terms that appear everywhere get low scores. BM25 uses a logarithmic IDF formula:

IDF(t) = log((N - df(t) + 0.5) / (df(t) + 0.5) + 1)

Where N is the total number of documents and df(t) is the number of documents containing term t.
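
The formula above translates directly into a few lines of Python. This sketch assumes the natural logarithm (the formula does not state a base), and the corpus sizes in the example are made up for illustration.

```python
import math


def idf(n_docs: int, doc_freq: int) -> float:
    """IDF(t) = log((N - df(t) + 0.5) / (df(t) + 0.5) + 1), natural log assumed."""
    return math.log((n_docs - doc_freq + 0.5) / (doc_freq + 0.5) + 1)


# Hypothetical corpus of 1,000 documents.
rare = idf(1000, 10)     # term found in 10 documents
common = idf(1000, 900)  # term found in 900 documents
print(f"rare term: {rare:.3f}, common term: {common:.3f}")
```

The `+ 1` inside the logarithm keeps the value positive even for terms that occur in almost every document.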

Document Length Normalization

Longer documents naturally contain more term occurrences, but that doesn't make them more relevant. A 10,000-word document mentioning "kubernetes" five times is likely less focused on the topic than a 500-word document with the same count.

BM25 normalizes for document length using the parameter b (typically set to 0.75, range 0 to 1). When b = 1, full length normalization is applied -- longer documents are penalized proportionally. When b = 0, no normalization is applied. The normalization compares each document's length to the average document length in the corpus.
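
The effect of b can be sketched in Python by computing the term-frequency component for the two documents from the example above. The average length of 1,000 words and the helper names are assumptions made for illustration.

```python
def length_norm(doc_len: float, avg_doc_len: float, b: float = 0.75) -> float:
    """Length factor 1 - b + b * (|D| / avgdl) used in the BM25 denominator."""
    return 1 - b + b * (doc_len / avg_doc_len)


def tf_component(tf, doc_len, avg_doc_len, k1=1.2, b=0.75):
    """Saturating term-frequency component with length normalization applied."""
    return tf * (k1 + 1) / (tf + k1 * length_norm(doc_len, avg_doc_len, b))


AVGDL = 1000  # hypothetical average document length, in words

short_doc = tf_component(5, doc_len=500, avg_doc_len=AVGDL)
long_doc = tf_component(5, doc_len=10_000, avg_doc_len=AVGDL)
long_doc_b0 = tf_component(5, doc_len=10_000, avg_doc_len=AVGDL, b=0.0)

print(f"500 words:            {short_doc:.3f}")
print(f"10,000 words:         {long_doc:.3f}")
print(f"10,000 words, b = 0:  {long_doc_b0:.3f}")
```

With the same five occurrences, the short document gets the larger contribution. Setting b = 0 makes document length irrelevant.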

The BM25 Formula

Putting it together, the BM25 score for a document D given a query Q with terms q1, q2, ..., qn is:

BM25(D, Q) = sum(IDF(qi) * (tf(qi, D) * (k1 + 1)) / (tf(qi, D) + k1 * (1 - b + b * (|D| / avgdl))))

Where:

- tf(qi, D) is the frequency of term qi in document D

- |D| is the document length

- avgdl is the average document length across the corpus

- k1 controls term frequency saturation (default: 1.2)

- b controls document length normalization (default: 0.75)
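
As a rough illustration, the whole formula fits in a short Python function. This is a brute-force sketch that rescans the corpus for every term, which a real engine would avoid by using an index; the tiny corpus, the natural-log IDF, and the name `bm25_score` are assumptions for the example.

```python
import math
from collections import Counter


def bm25_score(query_terms, doc_tokens, corpus, k1=1.2, b=0.75):
    """Score one tokenized document against a query, following the formula above."""
    n_docs = len(corpus)
    avgdl = sum(len(doc) for doc in corpus) / n_docs
    norm = 1 - b + b * (len(doc_tokens) / avgdl)
    tf = Counter(doc_tokens)

    score = 0.0
    for term in query_terms:
        df = sum(1 for doc in corpus if term in doc)  # documents containing the term
        idf = math.log((n_docs - df + 0.5) / (df + 0.5) + 1)
        score += idf * (tf[term] * (k1 + 1)) / (tf[term] + k1 * norm)
    return score


# Hypothetical, already-tokenized corpus.
corpus = [
    ["kubernetes", "deployment", "error", "kubernetes"],
    ["database", "error", "log"],
    ["kubernetes", "cluster", "setup"],
]
query = ["kubernetes", "deployment"]
scores = [bm25_score(query, doc, corpus) for doc in corpus]
print(scores)
```

The first document matches both query terms and ranks highest. The second matches neither and scores zero.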

How Inverted Indexes Power Full-Text Search

BM25 scoring is only half the story. The other half is the data structure that makes it possible to score documents quickly: the inverted index.

Tokenization

Before indexing, raw text is broken into tokens. The sentence "BM25 handles full-text search" might tokenize into: ["bm25", "handles", "full", "text", "search"]. Tokenization typically includes lowercasing, punctuation removal, and often stemming (reducing words to their root form -- "searching" becomes "search").
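
A minimal tokenizer along these lines can be sketched in Python. It only lowercases and splits on non-alphanumeric characters; stemming is left out, and the regular expression is one simple choice among many.

```python
import re


def tokenize(text: str) -> list[str]:
    """Lowercase the text and keep runs of ASCII letters and digits as tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


print(tokenize("BM25 handles full-text search"))
# ['bm25', 'handles', 'full', 'text', 'search']
```

Whatever rules are used, the same tokenizer must be applied at index time and at query time, otherwise query terms will not line up with indexed terms.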

Posting Lists

The inverted index maps each unique token to a posting list -- a sorted list of document IDs where that token appears, along with term frequency and position information:

"kubernetes" → [(doc_3, tf=5), (doc_17, tf=2), (doc_42, tf=8), ...]

"deployment" → [(doc_3, tf=3), (doc_8, tf=1), (doc_17, tf=6), ...]

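Building posting lists like the ones above takes only a few lines of Python. This sketch stores term frequencies but not positions, and the document IDs and token lists are invented to mirror the example.

```python
from collections import Counter, defaultdict


def build_index(docs: dict[int, list[str]]) -> dict[str, list[tuple[int, int]]]:
    """Map each term to a posting list of (doc_id, tf), sorted by doc_id."""
    index = defaultdict(list)
    for doc_id in sorted(docs):  # visiting IDs in order keeps every posting list sorted
        for term, tf in Counter(docs[doc_id]).items():
            index[term].append((doc_id, tf))
    return dict(index)


# Hypothetical tokenized documents keyed by ID.
docs = {
    17: ["kubernetes"] * 2 + ["deployment"] * 6,
    3: ["kubernetes"] * 5 + ["deployment"] * 3,
    8: ["deployment"],
}
index = build_index(docs)
print(index["kubernetes"])  # [(3, 5), (17, 2)]
print(index["deployment"])  # [(3, 3), (8, 1), (17, 6)]
```
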
Query Execution

When a query arrives, the search engine:

- Tokenizes the query into terms

- Looks up the posting list for each term

- Intersects or unions the posting lists (depending on AND/OR semantics)

- Computes BM25 scores for candidate documents

- Returns the top-k documents sorted by score

This process is fast because posting lists are pre-computed at index time. A multi-term query only needs to scan the posting lists for its specific terms rather than every document in the corpus.
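
The steps above can be sketched end to end in Python. `TinyIndex` is a hypothetical in-memory class written for this example, not the API of any search engine; it uses dictionaries instead of compressed posting lists and a simple regex tokenizer.

```python
import heapq
import math
import re
from collections import Counter, defaultdict


def tokenize(text):
    return re.findall(r"[a-z0-9]+", text.lower())


class TinyIndex:
    def __init__(self, docs, k1=1.2, b=0.75):
        self.k1, self.b = k1, b
        self.doc_len = {}
        self.postings = defaultdict(dict)  # term -> {doc_id: tf}
        for doc_id, text in docs.items():
            tokens = tokenize(text)
            self.doc_len[doc_id] = len(tokens)
            for term, tf in Counter(tokens).items():
                self.postings[term][doc_id] = tf
        self.n_docs = len(docs)
        self.avgdl = sum(self.doc_len.values()) / self.n_docs

    def search(self, query, k=10, require_all=False):
        terms = tokenize(query)                                # 1. tokenize the query
        if not terms:
            return []
        lists = [self.postings.get(t, {}) for t in terms]      # 2. look up posting lists
        doc_sets = [set(p) for p in lists]
        combine = set.intersection if require_all else set.union
        candidates = combine(*doc_sets)                        # 3. AND / OR semantics
        scores = {}
        for doc_id in candidates:                              # 4. BM25 per candidate
            norm = 1 - self.b + self.b * self.doc_len[doc_id] / self.avgdl
            score = 0.0
            for posting in lists:
                tf = posting.get(doc_id, 0)
                df = len(posting)
                idf = math.log((self.n_docs - df + 0.5) / (df + 0.5) + 1)
                score += idf * tf * (self.k1 + 1) / (tf + self.k1 * norm)
            scores[doc_id] = score
        return heapq.nlargest(k, scores.items(), key=lambda item: item[1])  # 5. top-k


# Hypothetical documents.
index = TinyIndex({
    1: "Kubernetes deployment error after upgrade",
    2: "Database error log rotation",
    3: "Kubernetes cluster setup guide",
})
or_hits = index.search("kubernetes deployment error")
and_hits = index.search("kubernetes deployment error", require_all=True)
print(or_hits)
print(and_hits)
```

Only documents that appear in at least one posting list are ever scored, which is why the work scales with the size of the posting lists rather than with the size of the corpus.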

BM25 vs. TF-IDF

TF-IDF (Term Frequency -- Inverse Document Frequency) is the predecessor to BM25. Both use term frequency and inverse document frequency, but BM25 improves on TF-IDF in two important ways:

- Term frequency saturation: TF-IDF uses raw or log-scaled term frequency, which continues to grow with more occurrences. BM25's saturation function ensures that additional occurrences of a term contribute diminishing marginal relevance, which better reflects how humans judge relevance.

- Document length normalization: TF-IDF has no built-in mechanism for adjusting scores based on document length. BM25's b parameter provides a tunable normalization that penalizes longer documents appropriately.

These differences make BM25 consistently more effective in benchmarks and real-world search applications. TF-IDF is still used in some contexts (notably, as a feature in machine learning pipelines), but BM25 is the default choice for document ranking.

Full-Text Search in SQL Databases vs. Dedicated Search Engines

Full-text search is available in both SQL databases and dedicated search engines, with different tradeoffs:

SQL databases (PostgreSQL, MySQL) provide built-in full-text search using tsvector / tsquery (PostgreSQL) or MATCH ... AGAINST (MySQL). This is convenient, since it needs no additional infrastructure, but limited. Built-in relevance ranking is usually simpler than BM25: PostgreSQL's ts_rank is based on term frequency within the document and does not use corpus-wide term rarity, while MySQL's InnoDB engine uses a TF-IDF variant. Tokenization options are also limited, and performance at scale tends to lag behind dedicated engines.

Dedicated search engines (Elasticsearch, Apache Solr, Meilisearch) are purpose-built for search. They provide advanced tokenization and analyzers, configurable BM25 parameters, faceting, highlighting, suggestions, and horizontal scaling through index sharding. The tradeoff is operational complexity -- another system to deploy, monitor, and keep in sync with your primary data store.

When BM25 Falls Short

BM25 is powerful but limited to lexical matching: it can only find documents that contain the query's terms, after whatever normalization (lowercasing, stemming) the analyzer applies. This creates a fundamental problem called vocabulary mismatch:

- "How do I cancel my subscription?" won't match a document titled "Account termination guide"

- "Fix slow database" won't match "Query performance optimization"

- "ML model serving" won't match "Machine learning inference deployment"

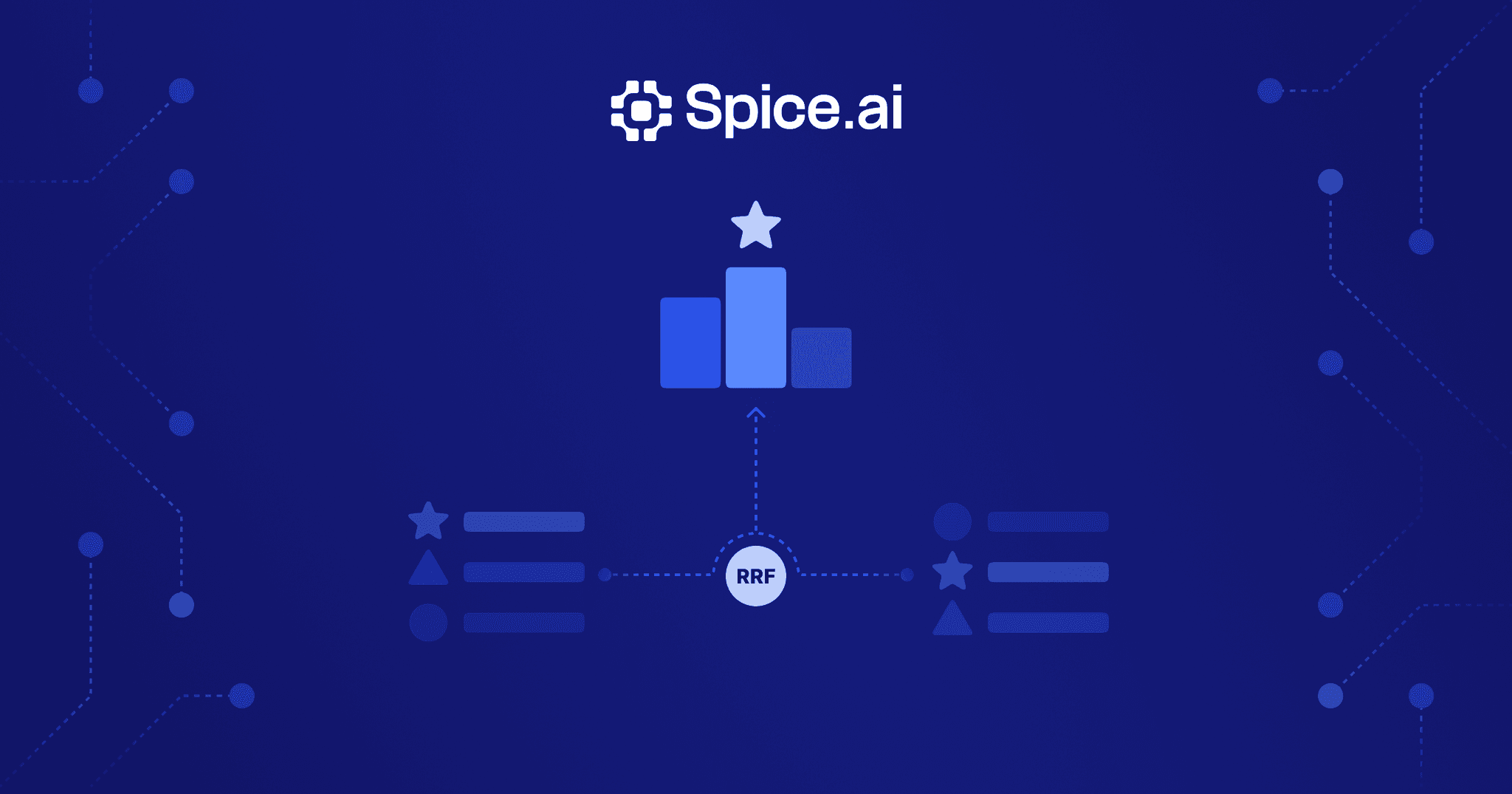

When vocabulary mismatch is a significant problem, consider hybrid search, which combines BM25 full-text search with vector search to capture both exact term matches and semantic similarity. Hybrid search uses embeddings to understand meaning, while BM25 handles the precise keyword matching that vector search misses.

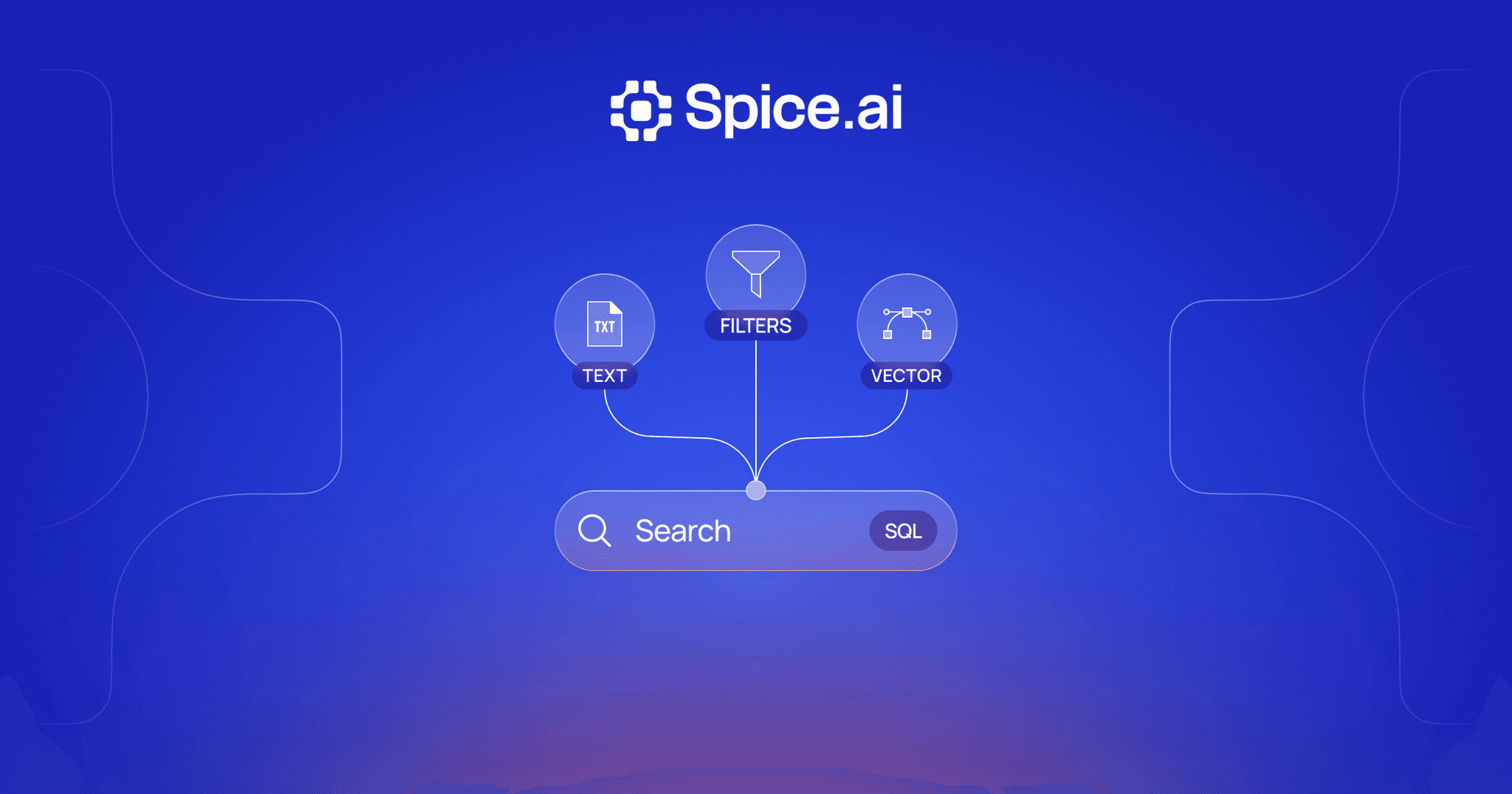
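
One common way to combine a BM25 ranking with a vector-search ranking is reciprocal rank fusion (RRF), which looks only at each document's rank in every list and ignores the raw scores. The Python sketch below is a generic illustration and not any particular product's implementation; the document IDs are invented, and k = 60 is a commonly used constant.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse ranked lists of doc IDs: each list adds 1 / (k + rank) per document."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)


# Hypothetical result lists, best match first.
bm25_ranking = ["doc_a", "doc_b", "doc_c"]
vector_ranking = ["doc_c", "doc_a", "doc_d"]

fused = reciprocal_rank_fusion([bm25_ranking, vector_ranking])
print(fused)  # ['doc_a', 'doc_c', 'doc_b', 'doc_d']
```

Because only ranks are used, BM25 scores and vector similarities never need to be put on a common scale.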

BM25 Full-Text Search with Spice

Spice integrates BM25 full-text search alongside vector search and SQL federation in a single unified runtime. Rather than deploying a separate search engine and keeping it synchronized with your data sources, Spice provides full-text search as a native query capability.

This means you can:

- Run BM25 full-text search across federated data from 30+ connected sources without maintaining separate search infrastructure

- Combine full-text search with vector similarity in hybrid search queries using built-in reciprocal rank fusion (RRF)

- Use real-time change data capture (CDC) to keep search indexes fresh as source data changes

- Express search queries in standard SQL alongside your existing analytical and operational queries

    -- Full-text search in Spice
    SELECT * FROM search(
      'knowledge_base',
      'kubernetes deployment error',
      mode => 'fts',
      limit => 10
    )

The unified approach eliminates the data synchronization problem -- when source data changes, both full-text and vector indexes update through the same change data capture pipeline. This is especially valuable for RAG applications where stale search indexes lead to outdated or incorrect answers.

Advanced Topics

Stemming and Analyzers

Tokenization is more nuanced than splitting on whitespace. Analyzers are configurable pipelines that process text before indexing and at query time. A typical analyzer includes:

- Character filters: Normalize Unicode, strip HTML tags, or replace patterns

- Tokenizer: Split text into tokens (by whitespace, word boundaries, or n-grams)

- Token filters: Lowercase, remove stop words, apply stemming or lemmatization

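Stemming reduces words to a common root form so that "running" and "runs" both match the stem "run." Irregular forms such as "ran" are generally not handled by rule-based stemmers; mapping them to "run" requires lemmatization, which relies on a dictionary. Common choices include the Porter stemmer (a fast, rule-based English stemmer) and the Snowball family (stemmers for many languages, including a revised English stemmer often called Porter2). Stemming improves recall but can reduce precision: "university" and "universe" might stem to the same root.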
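
The three analyzer stages can be sketched as a small Python pipeline. The stop word list is a tiny made-up sample, and `toy_stem` is a deliberately crude suffix stripper written for this example; it is not the Porter or Snowball algorithm.

```python
import re

STOP_WORDS = {"the", "a", "an", "and", "of", "is", "to"}  # tiny illustrative list


def strip_html(text: str) -> str:
    """Character filter: replace anything that looks like an HTML tag with a space."""
    return re.sub(r"<[^>]+>", " ", text)


def toy_stem(token: str) -> str:
    """Crude suffix stripping, for illustration only (not a real stemmer)."""
    for suffix in ("ing", "es", "s"):
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token


def analyze(text: str) -> list[str]:
    text = strip_html(text)                              # character filter
    tokens = re.findall(r"[a-z0-9]+", text.lower())      # lowercase, then tokenize
    tokens = [t for t in tokens if t not in STOP_WORDS]  # token filter: stop words
    return [toy_stem(t) for t in tokens]                 # token filter: stemming


print(analyze("<p>Searching the indexes</p>"))  # ['search', 'index']
```

The toy stemmer turns "running" into "runn" rather than "run", which is exactly the kind of case that real stemming algorithms add extra rules for.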

Index Sharding

For large corpora, a single inverted index becomes a bottleneck. Sharding splits the index across multiple nodes, where each shard holds a subset of documents. A query is broadcast to all shards, each returns its local top-k results, and a coordinator merges the results.

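Sharding strategies include document-based sharding (each shard holds a subset of documents, typically assigned by hashing or at random) and term-based sharding (each shard holds posting lists for a subset of terms). Document-based sharding is more common because it distributes load evenly and allows each shard to compute local BM25 scores independently. One caveat is that IDF and average document length are then computed per shard unless the engine gathers global statistics, so scores for the same document can differ slightly depending on which shard holds it.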
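
The coordinator's merge step for document-based sharding can be sketched in Python as follows. The shard results are invented, and the sketch assumes the scores are comparable across shards.

```python
import heapq


def merge_top_k(shard_results, k):
    """Merge per-shard lists of (score, doc_id) into a single global top-k."""
    all_hits = (hit for hits in shard_results for hit in hits)
    return heapq.nlargest(k, all_hits, key=lambda hit: hit[0])


# Hypothetical local top-2 results from two shards.
shard_a = [(7.1, "doc_3"), (4.2, "doc_17")]
shard_b = [(6.5, "doc_42"), (1.3, "doc_8")]

top3 = merge_top_k([shard_a, shard_b], k=3)
print(top3)  # [(7.1, 'doc_3'), (6.5, 'doc_42'), (4.2, 'doc_17')]
```

Each shard only needs to return its own top k hits, because no document outside a shard's local top k can appear in the global top k.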

BM25F for Multi-Field Scoring

Documents often have multiple fields -- title, body, URL, metadata. A match in the title is typically more relevant than a match in the body. BM25F (BM25 with field weights) extends BM25 to handle multi-field documents by computing a weighted combination of term frequencies across fields before applying the BM25 formula.

For example, you might weight title matches 3x higher than body matches and URL matches 2x higher. BM25F computes an effective term frequency as tf_effective = w_title * tf_title + w_body * tf_body + w_url * tf_url, then applies the standard BM25 formula using this combined frequency. This produces better rankings than scoring each field independently and combining scores, because the saturation function is applied to the combined frequency rather than to each field separately.
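
The difference between combining frequencies first and combining scores afterwards can be seen in a small Python sketch. It is simplified: per-field length normalization, which full BM25F also includes, is omitted, and the field counts are invented.

```python
def effective_tf(field_tfs, weights):
    """Weighted sum of per-field term frequencies (length normalization omitted)."""
    return sum(weights[field] * tf for field, tf in field_tfs.items())


def saturate(tf, k1=1.2):
    """BM25 saturation for a document of average length."""
    return tf * (k1 + 1) / (tf + k1)


weights = {"title": 3.0, "url": 2.0, "body": 1.0}
field_tfs = {"title": 1, "url": 0, "body": 4}  # hypothetical counts for one term

# BM25F style: combine the frequencies, then saturate once.
combined = saturate(effective_tf(field_tfs, weights))

# Alternative: saturate each field separately, then add the weighted scores.
separate = sum(weights[f] * saturate(tf) for f, tf in field_tfs.items())

print(f"combined: {combined:.3f}, separate: {separate:.3f}")
```

The combined value stays below k1 + 1 no matter how many fields match, while the per-field sum can exceed it, so a term repeated across fields is rewarded more than once.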

BM25 Full-Text Search FAQ

What does BM25 stand for?

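BM25 stands for Best Match 25. The number identifies one variant in a series of "Best Match" weighting functions developed by Stephen Robertson, Karen Spärck Jones, and colleagues, and implemented in the Okapi information retrieval system at City University London, which is why it is often called Okapi BM25. It took its current form in the 1990s and has become the de facto standard ranking function for full-text search.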

What are the default BM25 parameters?

The two main BM25 parameters are k1 and b. The typical defaults are k1 = 1.2 (controls term frequency saturation -- higher values allow term frequency to contribute more) and b = 0.75 (controls document length normalization -- higher values penalize longer documents more). These defaults work well for most use cases, but tuning them on your specific data can improve results.

How does BM25 differ from TF-IDF?

BM25 improves on TF-IDF in two key ways: it applies a saturation function to term frequency (so the 10th occurrence of a term adds less relevance than the first), and it normalizes for document length (so longer documents are not unfairly favored). These improvements make BM25 consistently more effective for document ranking in practice.

When should I use BM25 vs. vector search?

Use BM25 when queries involve exact terms, identifiers, error codes, or technical terminology that must be matched precisely. Use vector search when queries are conceptual and vocabulary mismatch is likely. For most production systems, hybrid search -- combining BM25 and vector search -- delivers the best results by capturing both exact matches and semantic similarity.

Can BM25 handle multi-language search?

Yes, but it requires language-specific configuration. Each language needs appropriate tokenization rules, stop word lists, and stemming algorithms. Most search engines support multiple language analyzers that can be applied per-field or per-index. For multilingual corpora, you can either maintain separate indexes per language or use a language-detection step to route queries to the correct analyzer.

