What is Retrieval Augmented Generation?

Retrieval augmented generation (RAG) is an AI architecture pattern that improves large language model (LLM) responses by retrieving relevant data from external sources and including it in the prompt at inference time.

Large language models are trained on massive text corpora, but that training data has a cutoff date and doesn't include an organization's private data. When you ask an LLM a question about your company's products, internal policies, or recent events, it either guesses (hallucinating a plausible-sounding answer) or admits it doesn't know.

Retrieval augmented generation addresses this by adding a retrieval step before generation. Instead of relying solely on knowledge baked into model weights, RAG retrieves relevant documents, database records, or API responses and injects them into the LLM's context window. The model then generates its answer grounded in this specific, current data, which reduces (but does not eliminate) hallucinations and enables accurate, domain-specific responses.

How RAG Works

A RAG system operates in three stages: indexing source data ahead of time, retrieving relevant context at query time, and augmenting the LLM prompt with that context before generation.

Stage 1: Indexing

Before RAG can retrieve anything, source data must be indexed. This involves:

- Collecting source data from databases, knowledge bases, document stores, APIs, and file systems

- Chunking long documents into smaller segments (typically 256-1024 tokens) so that each chunk covers a focused topic and several retrieved chunks fit together within an LLM's context window

- Embedding each chunk into a vector representation using an embedding model (e.g., OpenAI's text-embedding-3 models, Cohere Embed, or open-source alternatives)

- Storing the vectors alongside the original text in a vector index or database

This indexing step runs ahead of time and must be refreshed as source data changes. Stale indexes are one of the most common failure modes in production RAG systems -- the retriever returns outdated context, and the LLM generates outdated answers.
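The indexing steps above can be sketched in a few lines. This is a minimal illustration, not a production pipeline: `toy_embed` is a hypothetical stand-in for a real embedding model (it hashes words into buckets), chunks are split by word count rather than tokens, and the sample document text is made up.

```python
import hashlib
import math

def chunk_words(text: str, max_words: int = 50) -> list[str]:
    """Split text into chunks of at most max_words words (stand-in for token-based chunking)."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]

def toy_embed(text: str, dim: int = 64) -> list[float]:
    """Hypothetical stand-in for an embedding model: hashed bag-of-words, L2-normalized."""
    vec = [0.0] * dim
    for word in text.lower().split():
        bucket = int(hashlib.md5(word.encode()).hexdigest(), 16) % dim
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]

def build_index(docs: dict[str, str], max_words: int = 50) -> list[dict]:
    """Chunk every document, embed each chunk, and store the vector next to the original text."""
    index = []
    for doc_id, text in docs.items():
        for n, chunk in enumerate(chunk_words(text, max_words)):
            index.append({"doc_id": doc_id, "chunk_no": n, "text": chunk, "vector": toy_embed(chunk)})
    return index

# Made-up sample document: 100 words, so it splits into chunks of 40, 40, and 20 words.
docs = {"refunds": "Enterprise plans can be refunded within 30 days of purchase. " * 10}
index = build_index(docs, max_words=40)
print(len(index))
```

In a real system the list would be replaced by a vector index or database, and `build_index` would be rerun (or updated incrementally) whenever source data changes.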

Stage 2: Retrieval

When a user query arrives:

- The query is embedded using the same embedding model used during indexing

- The query vector is compared against the index to find the most semantically similar chunks (typically using cosine similarity or dot product)

- The top-k most relevant chunks are returned as candidate context
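As a rough sketch of the retrieval steps above, the following brute-force search scores every stored vector against the query vector with cosine similarity and keeps the top k. The three-dimensional vectors and chunk IDs are invented for illustration; real embeddings have hundreds or thousands of dimensions, and production systems typically use an approximate nearest-neighbor index instead of a full scan.

```python
import math

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two vectors; 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def top_k(query_vec: list[float], index: dict[str, list[float]], k: int = 3) -> list[tuple[float, str]]:
    """Score every chunk against the query and return the k highest-scoring (score, chunk_id) pairs."""
    scored = [(cosine(query_vec, vec), chunk_id) for chunk_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]

# Hypothetical pre-computed chunk vectors.
index = {
    "refund-policy": [0.9, 0.1, 0.0],
    "pricing": [0.1, 0.9, 0.0],
    "onboarding": [0.0, 0.2, 0.9],
}
results = top_k([1.0, 0.0, 0.0], index, k=2)
print([chunk_id for _, chunk_id in results])
```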

In practice, pure vector search often isn't enough. Hybrid search -- combining vector similarity with keyword (BM25) matching -- significantly improves retrieval quality. Vector search captures semantic meaning ("What are your refund terms?" matches "return policy"), while keyword search catches exact terms that vector search might miss (product names, error codes, technical identifiers).
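One common way to merge a vector ranking with a keyword ranking is Reciprocal Rank Fusion (RRF), which adds up `1 / (k + rank)` for each document across the rankings. The sketch below assumes the two ranked lists already exist; the document IDs are made up, and `k = 60` is the conventional default rather than a tuned value.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked lists of document IDs into one list, best first."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            # Documents near the top of any list contribute more to the fused score.
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)

# Hypothetical results from the two retrievers for the same query.
vector_hits = ["return-policy", "billing-faq", "shipping"]
keyword_hits = ["error-E1042", "return-policy", "billing-faq"]
fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
print(fused)
```

Documents that appear in both lists rise to the top, while an exact-match hit that only keyword search found (here, the error code) still makes the fused list.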

The quality of retrieval is the single most important factor in RAG performance. If the retriever returns irrelevant chunks, the LLM generates poor answers regardless of how capable the model is. Improving retrieval quality -- through better chunking, hybrid search, re-ranking, and metadata filtering -- typically has a larger impact than switching to a more powerful LLM.

Stage 3: Augmented Generation

The retrieved chunks are assembled into the LLM prompt as context, typically before the user's question:

Context:

[Retrieved chunk 1]

[Retrieved chunk 2]

[Retrieved chunk 3]

User question: What is the refund policy for enterprise plans?

Answer based on the context above:

The LLM generates a response grounded in this specific, retrieved data. Because the model has the actual source material in its context, it can provide accurate, specific answers with citations traceable back to source documents.
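A prompt in the layout shown above can be assembled with a small helper. This is a sketch under assumptions: the `source` and `text` fields and the numbered-citation instruction are illustrative choices, not a required format, and the chunk contents are invented.

```python
def build_prompt(chunks: list[dict], question: str) -> str:
    """Place retrieved chunks before the question, numbering each so answers can cite them."""
    lines = ["Context:", ""]
    for n, chunk in enumerate(chunks, start=1):
        lines.append(f"[{n}] (source: {chunk['source']}) {chunk['text']}")
        lines.append("")
    lines.append(f"User question: {question}")
    lines.append("")
    lines.append("Answer based on the context above, citing sources by number:")
    return "\n".join(lines)

# Hypothetical retrieved chunks.
chunks = [
    {"source": "policies/refunds.md", "text": "Enterprise plans follow the refund terms in the master agreement."},
    {"source": "faq/billing.md", "text": "Refund requests are filed through the account portal."},
]
prompt = build_prompt(chunks, "What is the refund policy for enterprise plans?")
print(prompt)
```

Numbering the chunks and carrying the source path into the prompt is what makes citations traceable back to source documents.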

RAG vs. Fine-Tuning

RAG and fine-tuning are the two main approaches to customizing LLM behavior with domain-specific knowledge. They solve different problems and are often combined.

Fine-tuning modifies a model's weights by training on domain-specific data. This permanently embeds knowledge and behavior patterns into the model. Fine-tuning is effective for changing the model's tone, style, or reasoning patterns -- for example, training it to respond like a technical support agent or to follow a specific output format.

RAG retrieves knowledge at inference time without modifying the model. This is effective for injecting factual, frequently changing information -- product documentation, internal policies, customer data, real-time metrics.

The key tradeoff is maintenance cost vs. flexibility:

- Fine-tuning is expensive (GPU hours, labeled data) and slow to update. When information changes, the model must be retrained.

- RAG is cheap to update -- new knowledge becomes available as soon as it's indexed. But it depends on retrieval quality and is bounded by context window size.

Most production systems use both: fine-tuning for behavior and style, RAG for factual knowledge.

Production RAG Challenges

The gap between a RAG prototype and a production RAG system is significant. Several data infrastructure challenges determine whether the system is reliable enough for real users.

Data Freshness

If your vector index is rebuilt nightly, answers can be up to a day stale. For many use cases -- customer support, compliance queries, operational dashboards -- this staleness is unacceptable.

Production RAG systems need real-time or near-real-time indexing. This typically involves change data capture (CDC) to detect when source data changes and incrementally update the vector index. Without CDC, teams resort to periodic full re-indexing, which is slow and expensive at scale.
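The incremental approach can be sketched as a function that applies one change event at a time to the index. The event shape (`op`, `key`, `text`) is a hypothetical simplification of what a CDC stream delivers, and `fake_embed` stands in for a real embedding call.

```python
from typing import Callable

def apply_change(index: dict[str, dict], event: dict, embed: Callable[[str], list[float]]) -> None:
    """Apply a single change event so only the affected row is re-embedded."""
    op, key = event["op"], event["key"]
    if op == "delete":
        index.pop(key, None)
    elif op in ("insert", "update"):
        text = event["text"]
        index[key] = {"text": text, "vector": embed(text)}
    else:
        raise ValueError(f"unknown operation: {op}")

def fake_embed(text: str) -> list[float]:
    """Hypothetical stand-in for an embedding model."""
    return [float(len(text))]

# Made-up change events in the order the source system emitted them.
events = [
    {"op": "insert", "key": "doc-1", "text": "Refunds within 30 days"},
    {"op": "update", "key": "doc-1", "text": "Refunds within 60 days"},
    {"op": "insert", "key": "doc-2", "text": "Old pricing page"},
    {"op": "delete", "key": "doc-2"},
]
index: dict[str, dict] = {}
for event in events:
    apply_change(index, event, fake_embed)
print(index)
```

Only changed rows are re-embedded, which is why this scales better than a periodic full rebuild.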

Retrieval Quality

Poor retrieval is the most common reason RAG systems underperform. Common failure modes include:

- Chunking too aggressively: Important context is split across chunks, so no single chunk contains enough information

- Missing keyword matches: Pure vector search misses exact terms (product names, error codes) that users search for

- Irrelevant results: The retriever returns semantically similar but factually irrelevant content

- Missing metadata filters: Queries that should be scoped (e.g., "2026 pricing") retrieve content from all time periods

Hybrid search -- combining vector similarity with BM25 keyword matching -- addresses several of these failures. Re-ranking models (e.g., Cohere Rerank, cross-encoders) can further improve precision by scoring retrieved chunks against the query using a more expensive model.
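Metadata filtering, the last failure mode in the list above, can be illustrated with a filter applied before ranking. The candidate records, the `metadata` field, and the scores are invented for this sketch; in practice the filter is usually pushed down into the search engine or database query.

```python
def search_with_filter(candidates: list[dict], filters: dict, k: int = 3) -> list[dict]:
    """Keep only candidates whose metadata matches every filter, then return the top k by score."""
    matching = [
        c for c in candidates
        if all(c["metadata"].get(key) == value for key, value in filters.items())
    ]
    return sorted(matching, key=lambda c: c["score"], reverse=True)[:k]

# Hypothetical scored candidates for the query "2026 pricing".
candidates = [
    {"id": "pricing-2024", "score": 0.92, "metadata": {"year": 2024}},
    {"id": "pricing-2026", "score": 0.88, "metadata": {"year": 2026}},
    {"id": "roadmap-2026", "score": 0.40, "metadata": {"year": 2026}},
]
hits = search_with_filter(candidates, {"year": 2026}, k=2)
print([hit["id"] for hit in hits])
```

Without the filter, the 2024 pricing page would rank first on similarity alone, even though it answers the wrong question.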

Multi-Source Federation

Enterprise data doesn't live in a single database. Customer records are in PostgreSQL, product documentation is in Confluence, support tickets are in Zendesk, and financial data is in Snowflake. A production RAG system needs to retrieve from all of these sources.

Building a separate retrieval pipeline for each source is fragile and doesn't scale. SQL federation provides a unified query interface across all sources, so the RAG system can retrieve structured data from any connected system alongside vector search results.

Observability and Evaluation

Production RAG systems need monitoring to detect quality degradation:

- Retrieval metrics: Are the retrieved chunks relevant? How often does the retriever return empty or low-confidence results?

- Generation metrics: Are the LLM's answers faithful to the retrieved context, or is it hallucinating beyond what the context supports?

- End-to-end metrics: Are users finding the answers helpful? What's the failure rate?

Without observability, RAG quality degrades silently as source data changes, retrieval patterns shift, or index staleness increases.
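Two retrieval metrics that are simple to track are hit rate at k (did any relevant chunk appear in the top k?) and mean reciprocal rank (how high was the first relevant chunk?). The sketch below assumes a small labeled evaluation set; the chunk IDs and relevance labels are made up.

```python
def hit_rate_at_k(results: list[list[str]], relevant: list[set[str]], k: int) -> float:
    """Fraction of queries with at least one relevant chunk in the top k results."""
    hits = sum(
        1 for ranked, rel in zip(results, relevant)
        if any(chunk_id in rel for chunk_id in ranked[:k])
    )
    return hits / len(results)

def mean_reciprocal_rank(results: list[list[str]], relevant: list[set[str]]) -> float:
    """Average of 1/rank of the first relevant chunk per query (0 if none was retrieved)."""
    total = 0.0
    for ranked, rel in zip(results, relevant):
        for rank, chunk_id in enumerate(ranked, start=1):
            if chunk_id in rel:
                total += 1.0 / rank
                break
    return total / len(results)

# Hypothetical retriever output and relevance labels for three evaluation queries.
results = [["a", "b", "c"], ["d", "e", "f"], ["g", "h", "i"]]
relevant = [{"a"}, {"f"}, {"z"}]
print(hit_rate_at_k(results, relevant, k=2))
print(mean_reciprocal_rank(results, relevant))
```

Tracking these numbers on a fixed evaluation set over time is one way to notice silent degradation before users do.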

Common RAG Use Cases

Customer Support and Knowledge Base Q&A

Connect an LLM to product documentation, FAQ articles, and support ticket history. Users ask natural language questions and receive accurate, cited answers drawn from authoritative sources -- reducing support ticket volume and improving resolution time.

Internal Enterprise Search

Employees search across internal wikis, policies, engineering docs, and Slack history using natural language. RAG provides answers with citations, not just a list of matching documents.

Code Assistance and Developer Tools

AI coding assistants use RAG to ground suggestions in the actual codebase, API documentation, and project-specific patterns. This dramatically reduces incorrect or hallucinated code suggestions.

Compliance and Regulatory Queries

Legal and compliance teams query regulatory documents, internal policies, and audit records. RAG ensures answers are traceable to specific source documents -- critical for regulatory compliance.

RAG with Spice

Spice provides the data infrastructure layer that production RAG systems require:

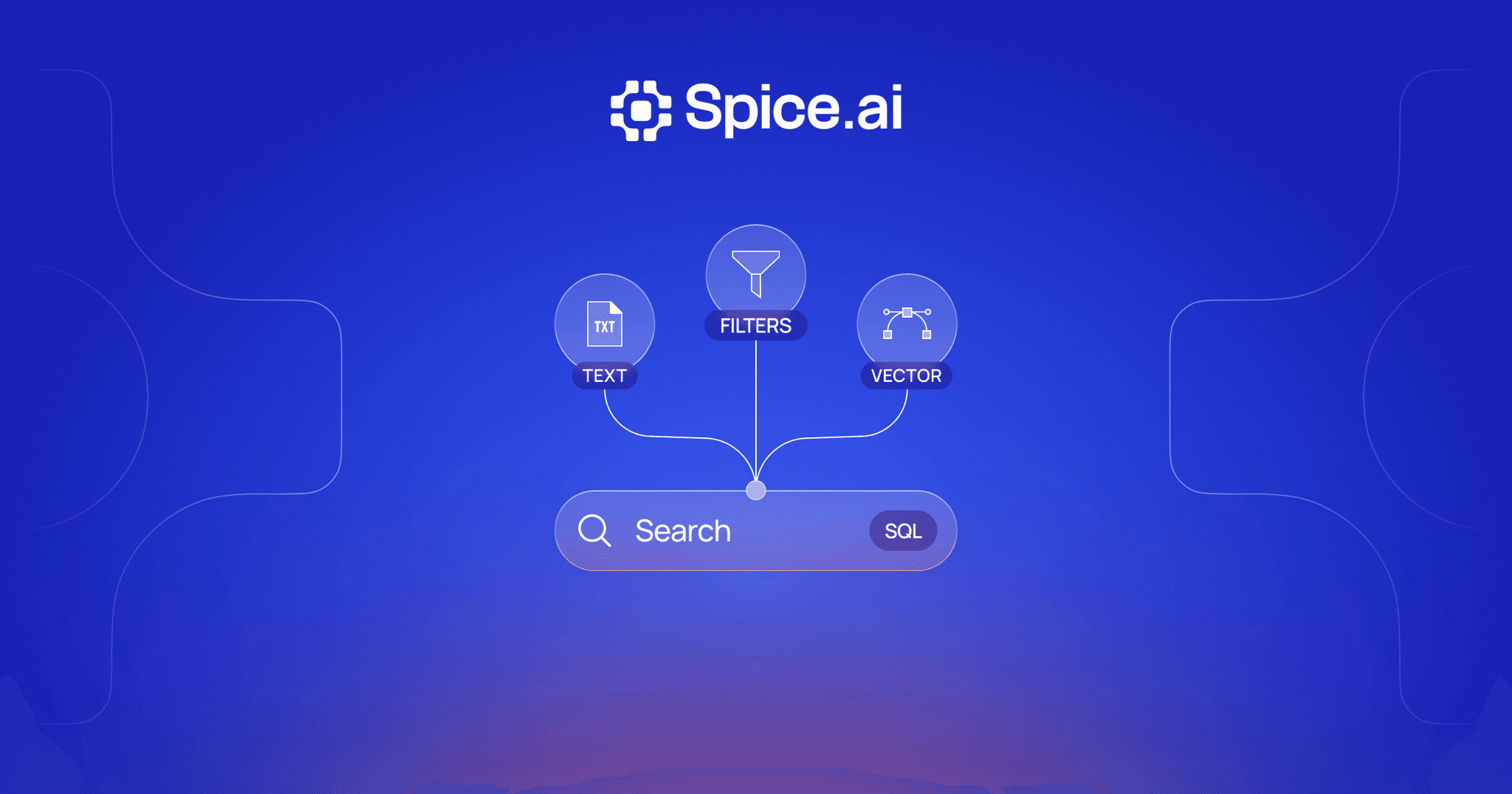

- Hybrid search combining vector, full-text, and SQL retrieval in a single query

- SQL federation for retrieving structured data from 30+ connected sources alongside vector search results

- Real-time CDC to keep vector indexes and acceleration caches fresh as source data changes

- LLM inference for running embedding and generation models alongside data queries

This unified runtime means RAG applications can index, retrieve, and generate in a single system instead of stitching together separate vector databases, search engines, and data pipelines.

Advanced Topics

The Full RAG Pipeline

A production RAG pipeline involves more stages than the basic three-step model suggests. Between the user's query and the final generated response, multiple processing and refinement steps determine answer quality.

Understanding each stage -- and where quality breaks down -- is essential for debugging and improving RAG systems in production.

Chunking Strategies

How source documents are split into chunks has an outsized impact on retrieval quality. The simplest approach -- splitting on a fixed token count -- often breaks mid-sentence or separates a question from its answer.

Recursive character splitting divides text hierarchically: first by section headers, then by paragraphs, then by sentences. This preserves semantic boundaries better than fixed-size splits. Semantic chunking goes further by using an embedding model to detect topic shifts and placing chunk boundaries where the semantic similarity between adjacent sentences drops. This produces chunks that are coherent units of meaning rather than arbitrary slices.
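A simplified version of recursive splitting is sketched below. It tries the coarsest separator first and only falls back to finer ones for pieces that are still too long. The separator list and the character-based limit are assumptions for illustration (real splitters usually count tokens), and the separator is dropped at each chunk boundary.

```python
def recursive_split(text: str, max_chars: int, separators: tuple[str, ...] = ("\n\n", "\n", ". ", " ")) -> list[str]:
    """Split text on the coarsest separator possible so each chunk is at most max_chars long."""
    text = text.strip()
    if not text:
        return []
    if len(text) <= max_chars:
        return [text]
    if not separators:
        # No separator left to try: fall back to a hard cut.
        return [text[i:i + max_chars] for i in range(0, len(text), max_chars)]
    sep, finer = separators[0], separators[1:]
    chunks: list[str] = []
    current = ""
    for piece in text.split(sep):
        candidate = f"{current}{sep}{piece}" if current else piece
        if len(candidate) <= max_chars:
            current = candidate  # keep merging small neighbouring pieces
            continue
        if current:
            chunks.append(current)
            current = ""
        if len(piece) <= max_chars:
            current = piece
        else:
            # This piece alone is too long: split it again with finer separators.
            chunks.extend(recursive_split(piece, max_chars, finer))
    if current:
        chunks.append(current)
    return chunks

sample = "Intro paragraph one.\n\nSecond paragraph is a bit longer. It has two sentences.\n\nThird."
chunks = recursive_split(sample, max_chars=40)
print(chunks)
```

Here the second paragraph exceeds the limit, so it alone is split at the sentence boundary while the other paragraphs stay whole.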

Parent-child chunking (also called small-to-big retrieval) indexes small chunks for retrieval precision but returns the surrounding parent chunk for generation context. The retriever matches on a focused passage, but the LLM receives enough surrounding context to generate a complete answer. This balances retrieval precision against generation context -- a tradeoff that single-level chunking cannot address.
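The small-to-big idea can be sketched as follows. Matching uses plain word overlap as a stand-in for vector similarity, and the parent documents are invented; the point is only that scoring happens on child chunks while whole parents are returned.

```python
def split_into_children(parents: dict[str, str], child_words: int = 8) -> list[dict]:
    """Cut each parent into small child chunks that remember which parent they came from."""
    children = []
    for parent_id, text in parents.items():
        words = text.split()
        for start in range(0, len(words), child_words):
            children.append({"parent_id": parent_id, "text": " ".join(words[start:start + child_words])})
    return children

def retrieve_parents(query: str, children: list[dict], parents: dict[str, str], k: int = 1) -> list[str]:
    """Rank child chunks against the query, then return the de-duplicated parent texts."""
    query_terms = set(query.lower().split())

    def score(child: dict) -> int:
        # Stand-in for embedding similarity: count of shared words.
        return len(query_terms & set(child["text"].lower().split()))

    results, seen = [], set()
    for child in sorted(children, key=score, reverse=True):
        if child["parent_id"] in seen:
            continue
        seen.add(child["parent_id"])
        results.append(parents[child["parent_id"]])
        if len(results) == k:
            break
    return results

# Made-up parent documents.
parents = {
    "refunds": "Enterprise plans are billed annually. Refund requests must be filed through the "
               "account portal. Approved refunds are returned to the original payment method.",
    "sso": "Single sign-on is configured by an administrator. SAML and OIDC providers are supported.",
}
children = split_into_children(parents)
context = retrieve_parents("refund requests", children, parents, k=1)
print(context)
```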

Chunk overlap -- including 10-20% of the previous chunk at the start of each new chunk -- helps preserve context at boundaries but increases index size. The optimal overlap depends on the nature of the source material.
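Overlap is easy to see with a sliding window. In this sketch, integers stand in for tokens, and a chunk size of 10 with an overlap of 2 (20%) is chosen purely for illustration.

```python
def chunk_with_overlap(tokens: list, size: int, overlap: int) -> list[list]:
    """Fixed-size chunks where each chunk starts with the last `overlap` tokens of the previous one."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    step = size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + size])
        if start + size >= len(tokens):
            break  # the last window already reached the end of the input
    return chunks

tokens = list(range(25))  # integers standing in for tokens
chunks = chunk_with_overlap(tokens, size=10, overlap=2)
print(chunks)
```

The 25 tokens produce three chunks holding 29 tokens in total, which is the index-size cost the overlap introduces.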

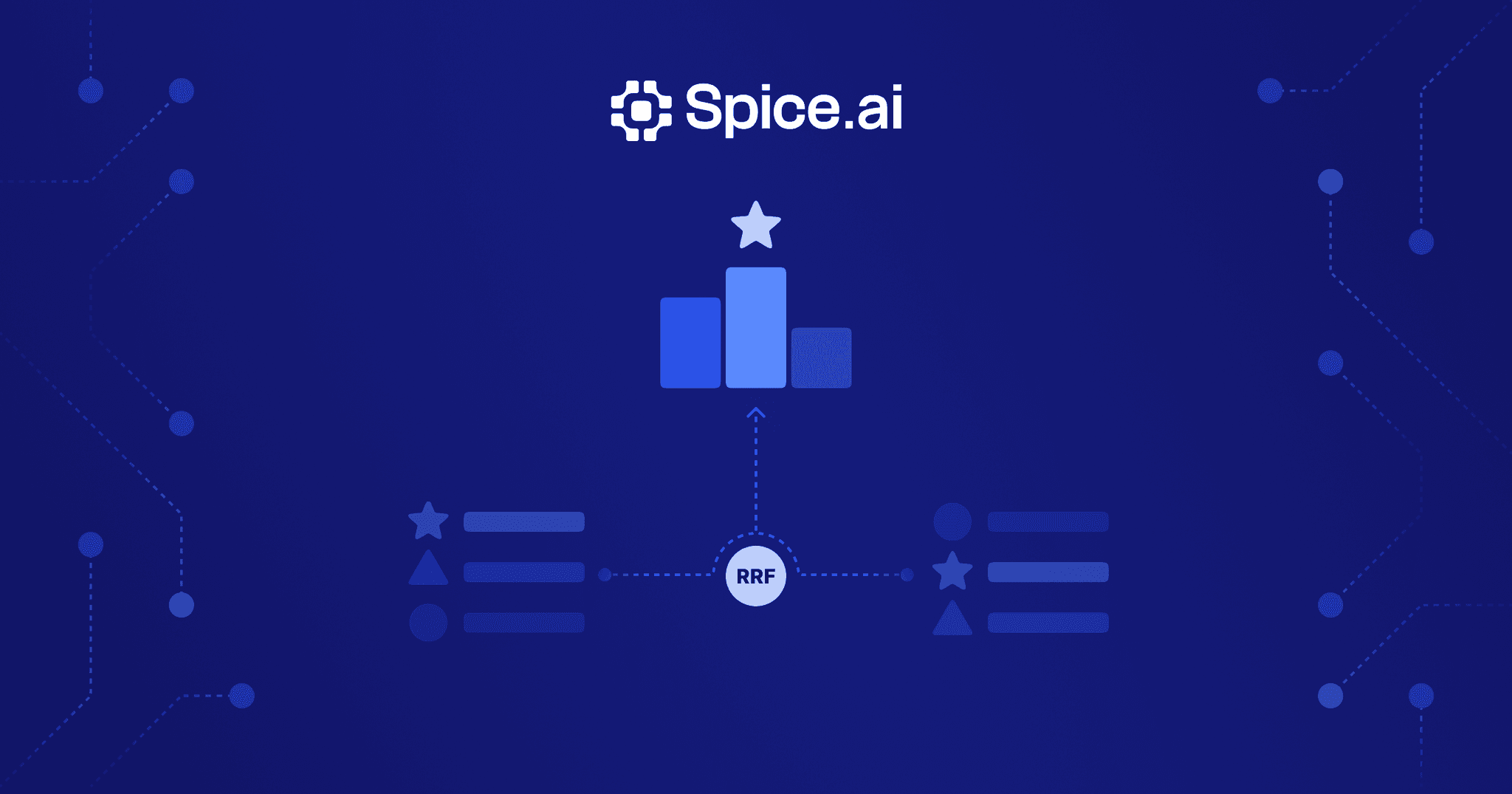

Re-ranking

Initial retrieval is built for speed. Vector retrieval uses a bi-encoder model that embeds the query and documents independently, and keyword retrieval scores term overlap. Both are fast but imprecise -- the query and document never directly attend to each other.

Cross-encoder re-rankers score each candidate by processing the query and document together through a single model, allowing full cross-attention between them. This produces significantly more accurate relevance scores but is too expensive to run against the full index. The standard pattern is to retrieve a larger candidate set (e.g., top-50 from initial retrieval) and re-rank to the final top-k (e.g., top-5).
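The retrieve-then-re-rank pattern looks roughly like this. Both scoring functions are toy stand-ins: `word_overlap` plays the cheap first stage and `toy_rerank_score` plays the expensive cross-encoder, which in a real system would be a model call. The documents are invented.

```python
def retrieve_then_rerank(query, docs, first_stage_score, rerank_score, n_candidates=50, k=5):
    """Shortlist with a cheap scorer, then re-order only the shortlist with an expensive scorer."""
    shortlist = sorted(docs, key=lambda d: first_stage_score(query, d), reverse=True)[:n_candidates]
    return sorted(shortlist, key=lambda d: rerank_score(query, d), reverse=True)[:k]

def word_overlap(query: str, doc: str) -> int:
    """Cheap first-stage stand-in: number of shared words."""
    return len(set(query.lower().split()) & set(doc.lower().split()))

reranked_docs = []  # records which documents the expensive scorer was asked about

def toy_rerank_score(query: str, doc: str) -> float:
    """Hypothetical stand-in for a cross-encoder: overlap normalized by document length."""
    reranked_docs.append(doc)
    return word_overlap(query, doc) / len(doc.split())

docs = [
    "team offsite agenda",
    "enterprise plans pricing overview",
    "refund policy for enterprise plans",
    "policy for office plants and desks",
]
top = retrieve_then_rerank("enterprise refund policy", docs, word_overlap, toy_rerank_score,
                           n_candidates=3, k=2)
print(top)
```

The expensive scorer only ever sees the shortlist, so its cost grows with `n_candidates` rather than with the size of the index.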

Re-ranking is one of the highest-impact improvements for RAG quality. In benchmarks, adding a cross-encoder re-ranker to a hybrid retrieval pipeline typically improves answer accuracy by 10-20% without changing any other component.

Multi-Hop Retrieval

Some questions cannot be answered from a single retrieved passage. "How does our enterprise pricing compare to competitors mentioned in Q4 analyst reports?" requires first finding the analyst reports, then extracting competitor mentions, then retrieving pricing data for each competitor.

Multi-hop retrieval decomposes complex queries into sub-queries, retrieves context for each, and chains the results. The LLM generates intermediate queries based on partial results, retrieves additional context, and synthesizes across all retrieved information. This is more complex than single-shot retrieval -- it requires the LLM to plan a retrieval strategy -- but it's necessary for questions that span multiple documents or require reasoning across disparate data sources.

Frameworks for multi-hop retrieval include iterative retrieval (retrieve, reason, retrieve again) and graph-based retrieval (following entity relationships across a knowledge graph to gather connected context). Both patterns increase latency but enable the system to answer questions that would otherwise require multiple user interactions.
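Iterative retrieval can be sketched as a loop that alternates between retrieving and planning the next query. Everything concrete here is hypothetical: `plan_next_query` is a hard-coded rule standing in for an LLM call, the two-entry corpus and the competitor name "Acme" are invented, and `retrieve` is a simple substring lookup.

```python
def multi_hop_retrieve(question, retrieve, plan_next_query, max_hops=3):
    """Retrieve, let the planner propose a follow-up query, and repeat until it returns None."""
    context = []
    query = question
    for _ in range(max_hops):
        for passage in retrieve(query):
            if passage not in context:
                context.append(passage)
        query = plan_next_query(question, context)
        if query is None:
            break
    return context

# Made-up corpus keyed by the phrase that retrieves each passage.
corpus = {
    "q4 analyst report": "Q4 analyst report: Acme is named as the main competitor.",
    "acme pricing": "Acme pricing: enterprise tier is quoted per seat.",
}

def retrieve(query: str) -> list[str]:
    """Toy retriever: return passages whose key phrase appears in the query."""
    return [text for key, text in corpus.items() if key in query.lower()]

def plan_next_query(question: str, context: list[str]):
    """Stand-in for the LLM planner: once a competitor is found, ask for its pricing."""
    found_competitor = any("Acme is named" in p for p in context)
    have_pricing = any(p.startswith("Acme pricing") for p in context)
    if found_competitor and not have_pricing:
        return "acme pricing"
    return None

question = "How does our pricing compare to competitors in the Q4 analyst report?"
context = multi_hop_retrieve(question, retrieve, plan_next_query)
print(context)
```

The `max_hops` cap bounds the added latency, since each hop costs one retrieval plus one planning call.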

RAG FAQ

What is the difference between RAG and fine-tuning?

Fine-tuning modifies a model's weights by training on domain-specific data, permanently embedding that knowledge. RAG retrieves relevant data at inference time and injects it into the prompt. Fine-tuning is better for changing style or behavior; RAG is better for injecting factual, frequently changing knowledge. Most production systems combine both.

What types of data can RAG retrieve from?

RAG can retrieve from any data source that can be indexed: relational databases, document stores, vector databases, APIs, PDFs, wikis, and file systems. SQL-based retrieval -- querying structured data directly -- is an emerging pattern that complements vector search for structured enterprise data.

Does RAG eliminate hallucinations completely?

No. RAG significantly reduces hallucinations by grounding responses in retrieved data, but the model can still misinterpret context or generate plausible but incorrect inferences. Proper evaluation, chunk quality monitoring, and response validation remain important in production systems.

What is hybrid search in the context of RAG?

Hybrid search combines vector similarity search (finding semantically similar content) with keyword search (exact term matching). This improves retrieval quality because vector search alone can miss exact terms while keyword search alone misses semantic meaning. Combining both methods is especially important for technical and domain-specific queries.

How does RAG handle large-scale enterprise data?

Enterprise RAG requires a data infrastructure layer that can federate queries across multiple sources, cache frequently accessed data for low-latency retrieval, and keep indexes fresh through real-time synchronization. Spice provides SQL federation, query acceleration, and hybrid search in a single runtime purpose-built for these requirements.

Learn more about RAG

Technical guides and blog posts on building production RAG systems with hybrid search and real-time data.

Hybrid Search Docs

Learn how Spice provides semantic, full-text, and hybrid search capabilities for RAG applications.

True Hybrid Search: Vector, Full-Text, and SQL in One Runtime

Build hybrid search without managing multiple systems. Query vectors, run full-text search, and execute SQL in one unified runtime.

Real-Time Hybrid Search Using RRF: A Hands-On Guide with Spice

Learn how to build hybrid search with Reciprocal Rank Fusion (RRF) directly in SQL using Spice -- combining text, vector, and time-based relevance in one query.
