What is Hybrid Search?

Hybrid search combines vector similarity search with keyword (lexical) search to deliver more accurate, comprehensive results than either method alone.

Search has evolved beyond simple keyword matching. Vector search uses embeddings to find semantically similar content -- "refund policy" matches "return process" even though they share no words. But vector search has blind spots: it can miss exact product names, error codes, or technical identifiers that keyword search handles effortlessly.

Hybrid search solves this by running both methods in parallel and combining their results. A user query is simultaneously matched against a vector index (for semantic relevance) and a keyword index (for exact term matching). The results are merged using a ranking algorithm that balances both signals, producing a final result set that captures both meaning and precision.

Why Pure Vector Search Falls Short

Vector search (also called semantic search) encodes text into high-dimensional vectors using an embedding model and finds the closest vectors to the query. This captures meaning well -- "How do I cancel my subscription?" matches content about "account termination procedures."

But vector search struggles with:

- Exact identifiers: Product names, model numbers, error codes, and acronyms may not embed well. Searching for "ERR-4502" might return results about errors in general rather than the specific error code.

- Rare or technical terms: Domain-specific jargon, newly coined terms, or proper nouns that weren't well-represented in the embedding model's training data produce weak vectors.

- Precision at the tail: For broad queries, vector search returns semantically related but not precisely relevant results. The top results are good, but quality drops quickly.

Why Pure Keyword Search Falls Short

Keyword search (BM25, TF-IDF) matches documents that contain the query's exact terms, weighted by frequency and rarity. This is precise for exact matches but misses semantic equivalents:

- Synonym blindness: "automobile insurance" won't match content about "car coverage" despite identical meaning.

- Intent misunderstanding: "How to speed up queries" won't match content titled "Query Performance Optimization" because the terms differ.

- Vocabulary mismatch: Users describe problems in their own words, which often don't match the terminology in documentation or knowledge bases.

How Hybrid Search Works

A hybrid search system operates in three stages: parallel retrieval, score normalization, and result fusion.

Parallel Retrieval

The query is processed by both search systems simultaneously:

- Vector search: The query is embedded into a vector and matched against the vector index using cosine similarity or dot product. Returns the top-k most semantically similar documents with similarity scores.

- Keyword search: The query is tokenized and matched against the inverted index using BM25 scoring. Returns the top-k documents containing the most relevant keyword matches.

These two retrievals are independent and can execute concurrently, so hybrid search doesn't add meaningful latency over running either method alone.

Score Normalization

Vector similarity scores and BM25 scores are on different scales. Cosine similarity ranges from -1 to 1, while BM25 scores are unbounded positive numbers. Before combining results, scores must be normalized to a common scale.

Common normalization approaches include min-max normalization (scaling to 0-1 range within each result set) and z-score normalization (centering on mean with unit standard deviation).

Result Fusion

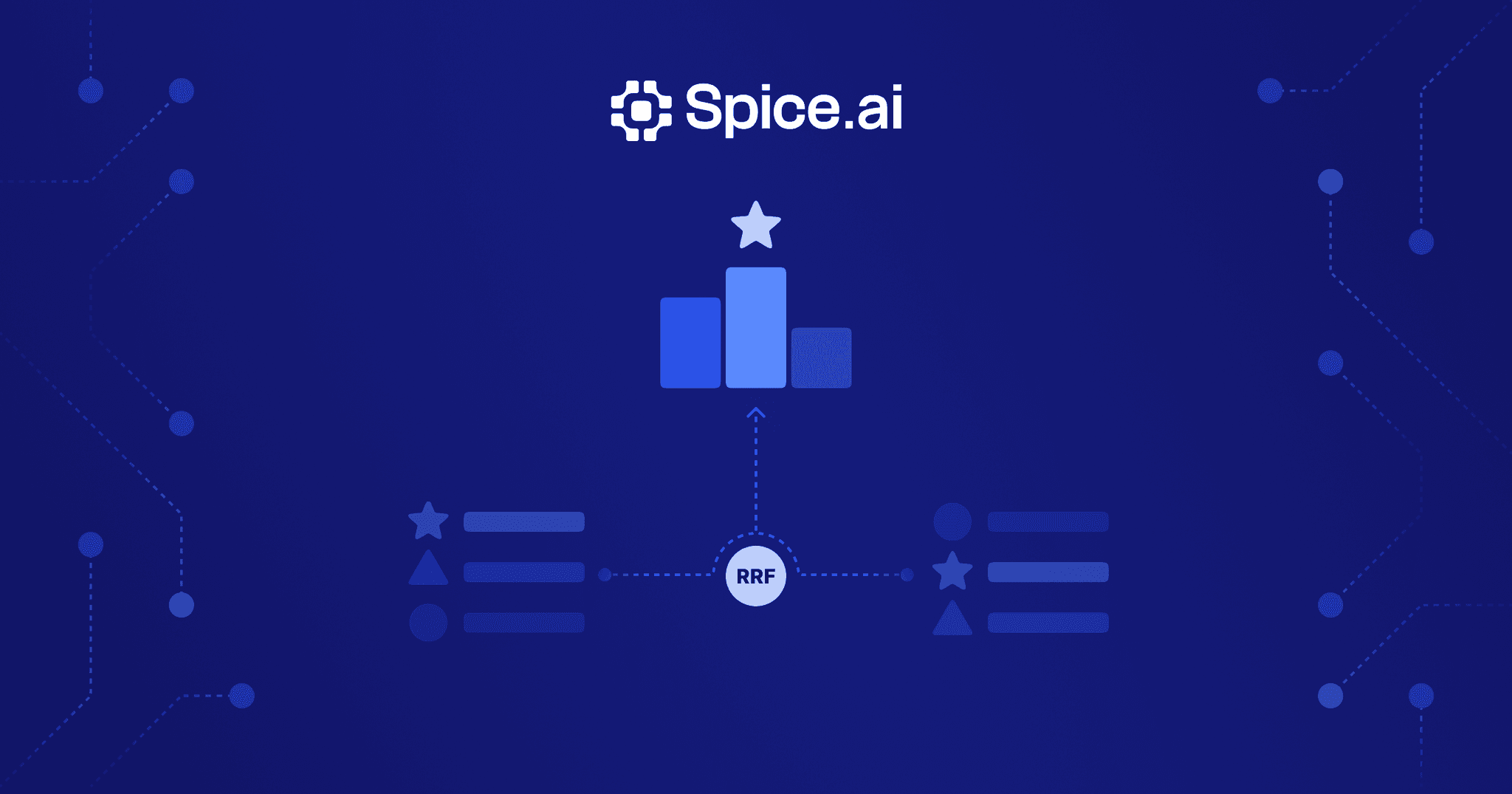

The normalized results are combined using a ranking algorithm. The most common approach is Reciprocal Rank Fusion (RRF), which works by:

- Assigning each result a score based on its rank position in each result set:

1 / (k + rank) - Summing scores for documents that appear in multiple result sets

- Sorting by the combined score

The key insight behind RRF is that it doesn't rely on raw scores at all -- only rank positions. This makes it robust to score distribution differences between search methods.

-- Hybrid search using rrf() in Spice

SELECT id, title, content, fused_score

FROM rrf(

vector_search(customer_docs, 'how to cancel subscription'),

text_search(customer_docs, 'cancel subscription', content),

join_key => 'id'

)

ORDER BY fused_score DESC

LIMIT 10;Other fusion methods include:

- Weighted linear combination:

score = alpha * vector_score + (1 - alpha) * keyword_score, where alpha controls the balance - Cross-encoder re-ranking: A more expensive model re-scores the merged candidates for higher precision

- Learned fusion: A trained model determines optimal weights per query type

Hybrid Search for RAG

Hybrid search is especially important for retrieval-augmented generation (RAG) systems, where retrieval quality directly determines answer quality.

RAG applications face a unique challenge: the retrieved context must be both semantically relevant (the right topic) and factually precise (the right details). Pure vector search might retrieve content about the right topic but miss the specific document containing the answer. Pure keyword search might find exact term matches in irrelevant contexts.

Hybrid search addresses both failure modes:

- Semantic recall: Vector search ensures the retriever captures conceptually related content even when the user's language differs from the source material

- Precision grounding: Keyword search ensures exact terms, names, and identifiers are matched, preventing the retriever from drifting to related-but-wrong content

In practice, RAG systems using hybrid search show measurably higher answer accuracy than those using either search method alone, especially for technical and domain-specific queries where vocabulary mismatch is common.

Hybrid Search vs. Separate Search Systems

Many teams build hybrid search by stitching together separate systems -- a vector database (Pinecone, Weaviate, Qdrant) alongside a search engine (Elasticsearch, OpenSearch). This approach works but introduces operational complexity:

- Two systems to deploy and manage: Separate infrastructure, monitoring, and scaling for each

- Data synchronization: Both systems must index the same data, and changes must propagate to both

- Application-layer fusion: The application must query both systems, normalize scores, and merge results

- Latency overhead: Two network round-trips instead of one

A unified runtime that supports vector search, keyword search, and SQL in a single system eliminates these problems. The data is indexed once, queries execute in a single round-trip, and fusion happens internally without application code.

Hybrid Search with Spice

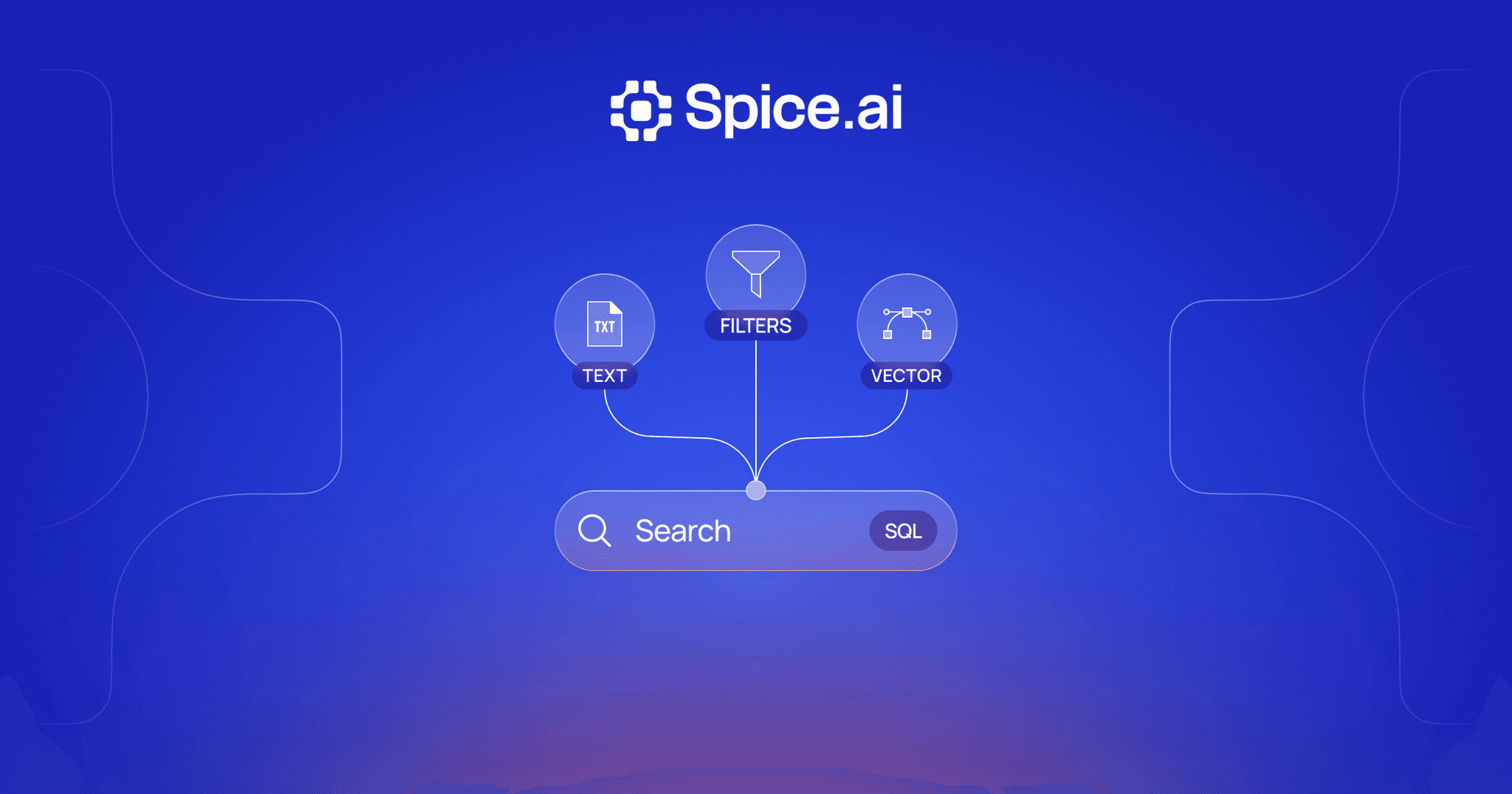

Spice provides hybrid search natively in a single runtime:

- Vector, full-text, and SQL search combined in one query engine -- no separate systems to manage

- Built-in RRF and weighted fusion for combining vector and keyword results

- SQL federation for searching across 30+ connected data sources

- Real-time CDC to keep search indexes fresh as source data changes

- LLM inference for generating embeddings alongside search queries in the same runtime

This unified approach means RAG applications can index, search, and generate in one system rather than orchestrating separate vector databases, search engines, and data pipelines.

Advanced Topics

Learned Sparse Representations (SPLADE)

Traditional keyword search relies on exact term matching with BM25 scoring. SPLADE (Sparse Lexical and Expansion) models improve on this by learning sparse representations that include term expansion -- a trained model predicts which vocabulary terms are relevant to a passage even if they don't appear in the text.

For example, a passage about "automobile insurance premiums" would receive non-zero weights for related terms like "car," "vehicle," "coverage," and "policy." This means SPLADE captures some semantic understanding within a sparse representation, bridging the gap between keyword and vector search.

The practical benefit is that SPLADE models can replace or augment BM25 in the keyword leg of a hybrid search pipeline. Because the output is still a sparse vector, it uses the same inverted index infrastructure as BM25 -- no separate vector database required. SPLADE representations are typically more expensive to compute than BM25 scores but cheaper than dense embeddings, making them an attractive middle ground.

In hybrid search systems, replacing BM25 with a SPLADE model improves recall on queries where vocabulary mismatch is an issue, while maintaining the precision advantages of sparse representations for exact-match queries. The tradeoff is additional indexing compute and the need to train or fine-tune the SPLADE model on domain-specific data for optimal results.

Cross-Encoder Re-ranking

The initial retrieval stage of hybrid search -- whether BM25, vector, or both -- uses models that encode the query and documents independently. This is efficient (each document embedding is computed once at index time) but limits how precisely the system can assess relevance.

Cross-encoder re-ranking addresses this by scoring each candidate document against the query in a single forward pass through a transformer model. The query and document tokens attend to each other directly, producing a more nuanced relevance score. Because this is computationally expensive (each query-document pair requires a full model inference), cross-encoders are applied only to the top candidates returned by the initial retrieval stage -- typically re-scoring the top 50-100 results to produce the final top-k.

The re-ranking stage typically adds 50-200ms of latency depending on the number of candidates and the model size. In practice, this is an acceptable tradeoff for applications like retrieval-augmented generation where retrieval precision directly determines answer quality. Models like Cohere Rerank, ColBERT, and open-source cross-encoders from the sentence-transformers library are commonly used.

Multi-Stage Retrieval Pipelines

Production search systems often use more than two stages. A common architecture is:

- Candidate generation: BM25 or a fast approximate nearest neighbor (ANN) search retrieves a broad set of candidates (hundreds to thousands) with high recall but moderate precision.

- First-pass ranking: A lightweight model (e.g., a small bi-encoder or SPLADE) re-scores candidates to reduce the set to a manageable size (50-100).

- Second-pass re-ranking: A cross-encoder re-ranks the reduced candidate set for maximum precision, producing the final top-k results.

Each stage narrows the candidate set while applying progressively more expensive (and more accurate) scoring. This cascade design balances latency and quality: the cheap first stage ensures nothing important is missed, while the expensive final stage ensures the top results are maximally relevant.

Tuning a multi-stage pipeline requires optimizing each stage independently. The candidate generation stage must have high recall (retrieve all potentially relevant documents), even at the cost of lower precision. The re-ranking stages must have high precision (correctly rank the most relevant documents at the top). Metrics like recall@100 for the first stage and NDCG@10 for the final stage are standard benchmarks.

Hybrid Search FAQ

What is Reciprocal Rank Fusion (RRF)?

RRF is a ranking algorithm that merges results from multiple search methods by scoring each document based on its rank position (not its raw score) in each result set. Documents appearing near the top of multiple result sets receive the highest combined scores. RRF is popular because it is simple, effective, and doesn't require score normalization. For a deeper explanation, see What is Reciprocal Rank Fusion (RRF)?

Does hybrid search add latency compared to vector search alone?

Minimal. The vector and keyword searches execute in parallel, so the total latency is roughly the maximum of the two rather than the sum. In a unified runtime where both indexes are co-located, hybrid search typically adds only a few milliseconds for the fusion step.

When should I use hybrid search instead of pure vector search?

Use hybrid search when your queries involve exact identifiers (product names, error codes, IDs), domain-specific terminology, or when retrieval precision matters more than just semantic similarity. In practice, hybrid search outperforms pure vector search for most production use cases, especially in RAG systems and enterprise search.

How do I tune the balance between vector and keyword results?

With RRF, the balance is determined by the k parameter (typically 60). With weighted linear combination, adjust the alpha weight between 0 (all keyword) and 1 (all vector). Start with equal weighting and tune based on evaluation metrics. The optimal balance depends on your data and query patterns.

Can hybrid search work with SQL queries?

Yes. In systems like Spice, hybrid search is expressed as SQL -- you can combine vector similarity, keyword matching, and structured SQL filters in a single query. This is especially powerful for filtering search results by metadata (date ranges, categories, access permissions) alongside semantic and keyword matching.

Learn more about hybrid search

Technical guides on building hybrid search with vector, full-text, and SQL in a single runtime.

Hybrid Search Docs

Learn how Spice provides semantic, full-text, and hybrid search capabilities in a single SQL-native runtime.

True Hybrid Search: Vector, Full-Text, and SQL in One Runtime

Build hybrid search without managing multiple systems. Query vectors, run full-text search, and execute SQL in one unified runtime.

Real-Time Hybrid Search Using RRF: A Hands-On Guide with Spice

Learn how to build hybrid search with RRF directly in SQL using Spice -- combining text, vector, and time-based relevance in one query.

See Spice in action

Walk through your use case with an engineer and see how Spice handles federation, acceleration, and AI integration for production workloads.

Talk to an engineer