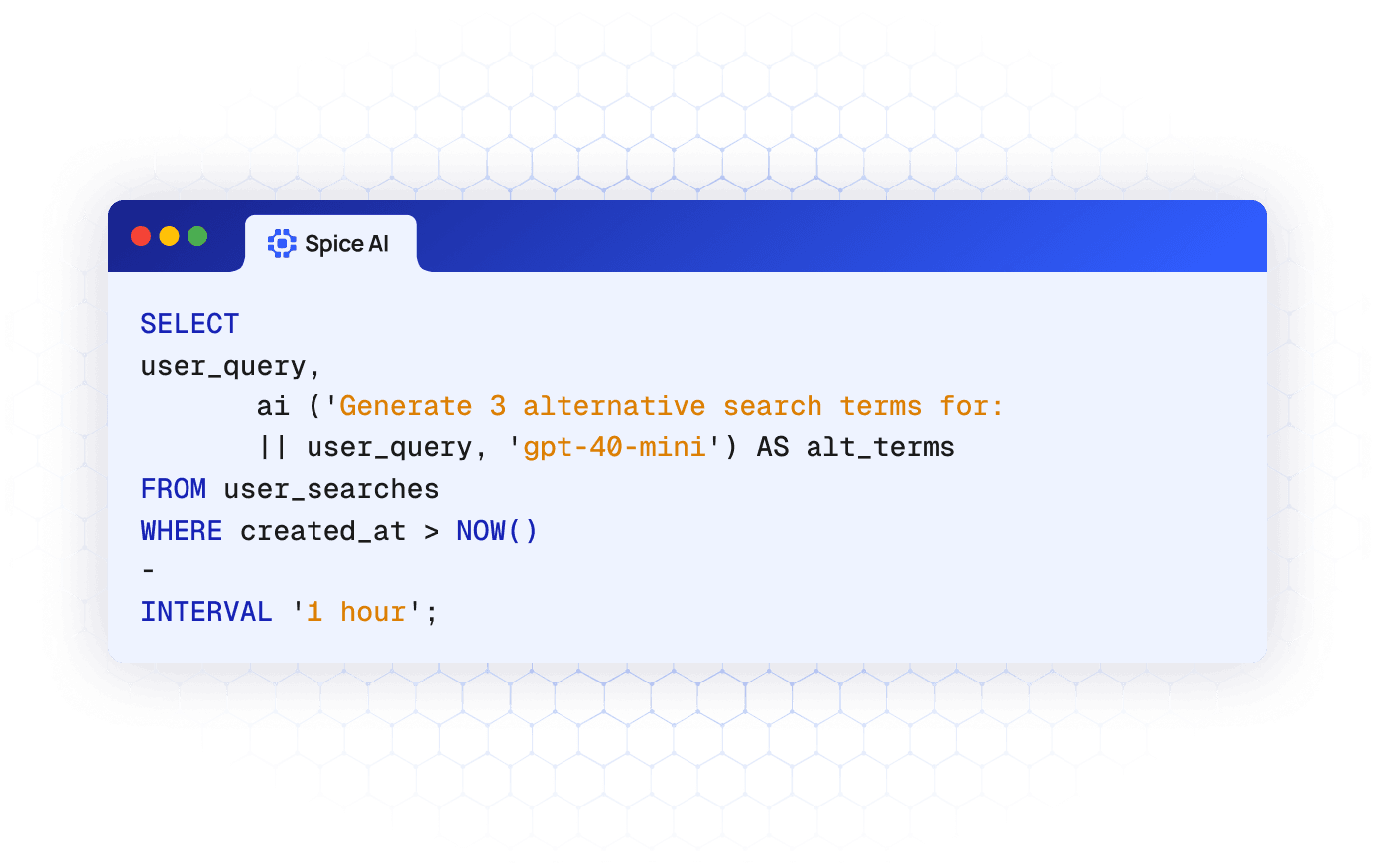

Bring AI to your SQL queries

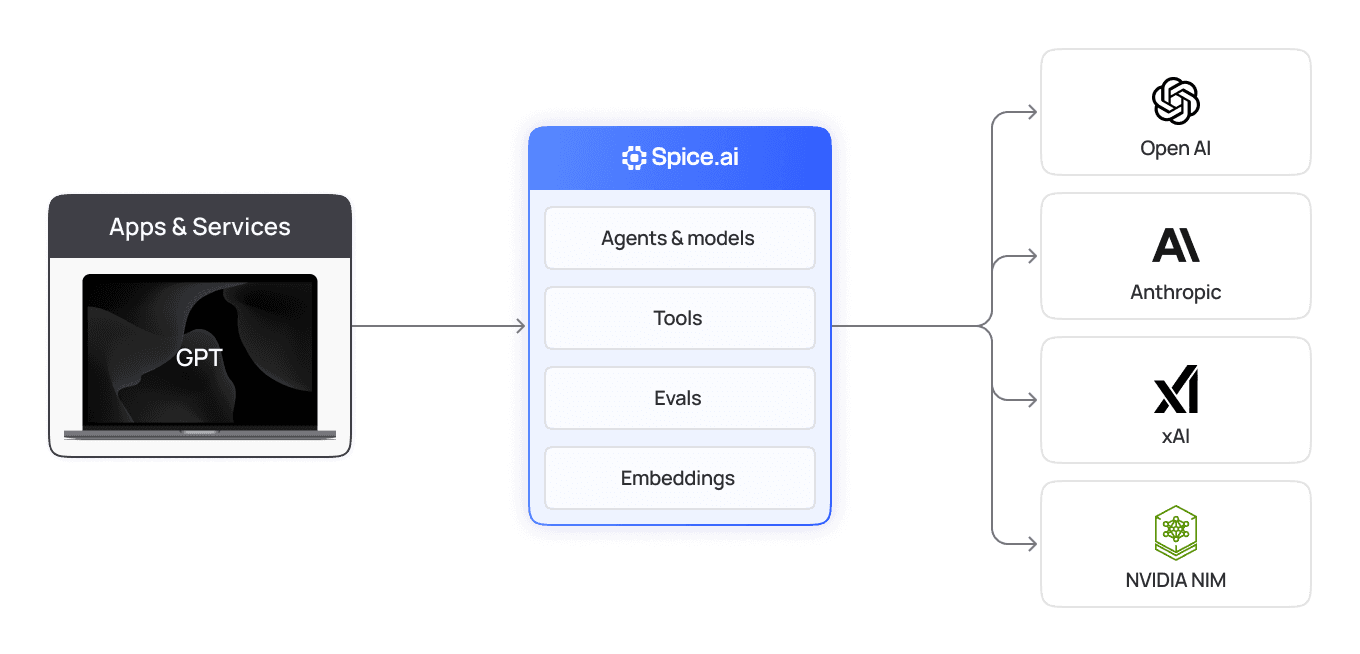

Spice transforms SQL into your interface for AI. Call models from OpenAI, Anthropic, or Amazon Bedrock with the AI() SQL function to generate text, classify data, and enrich results.

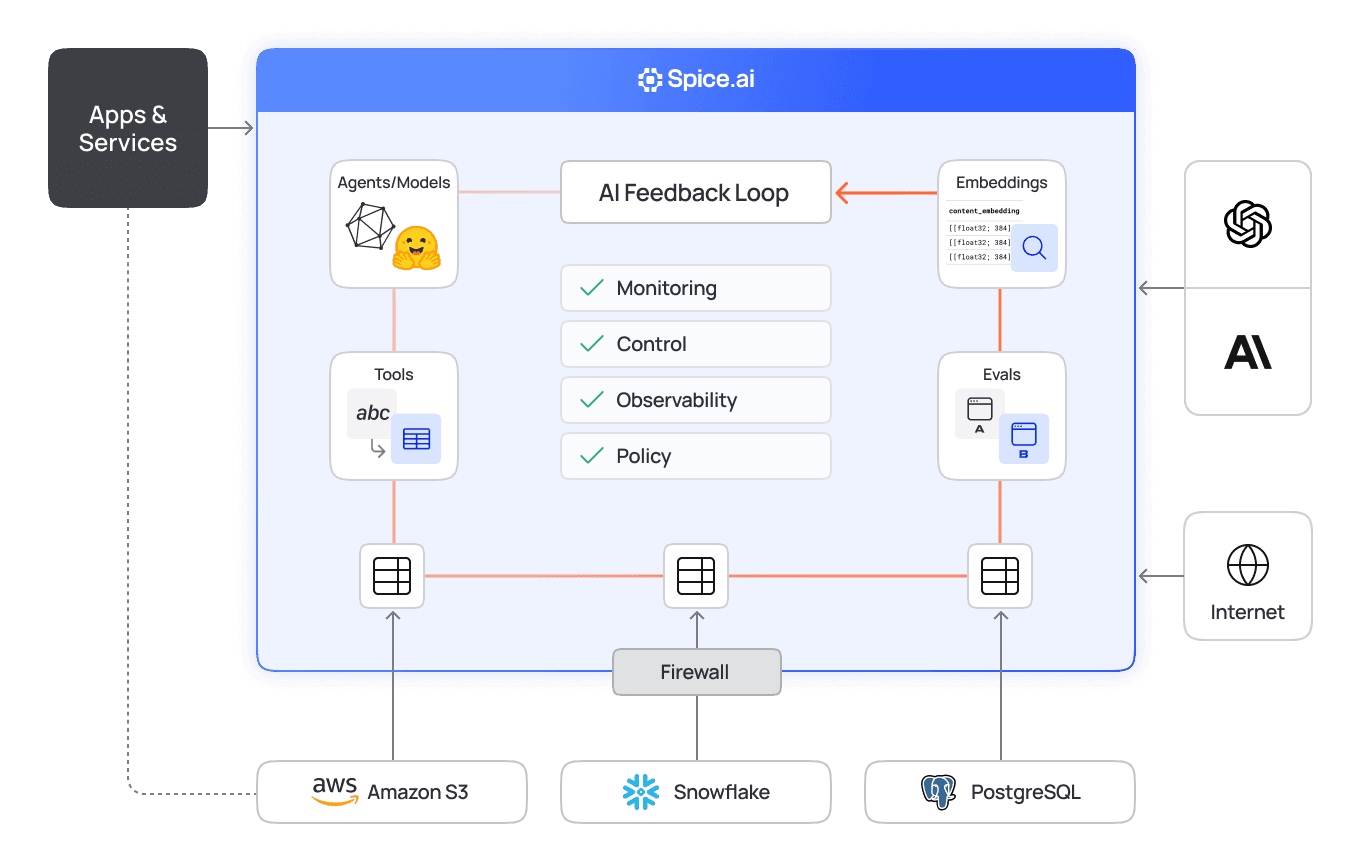

AI engine designed for developers

Spice makes AI a native part of SQL with built-in model functions, prompt execution, and inference orchestration.

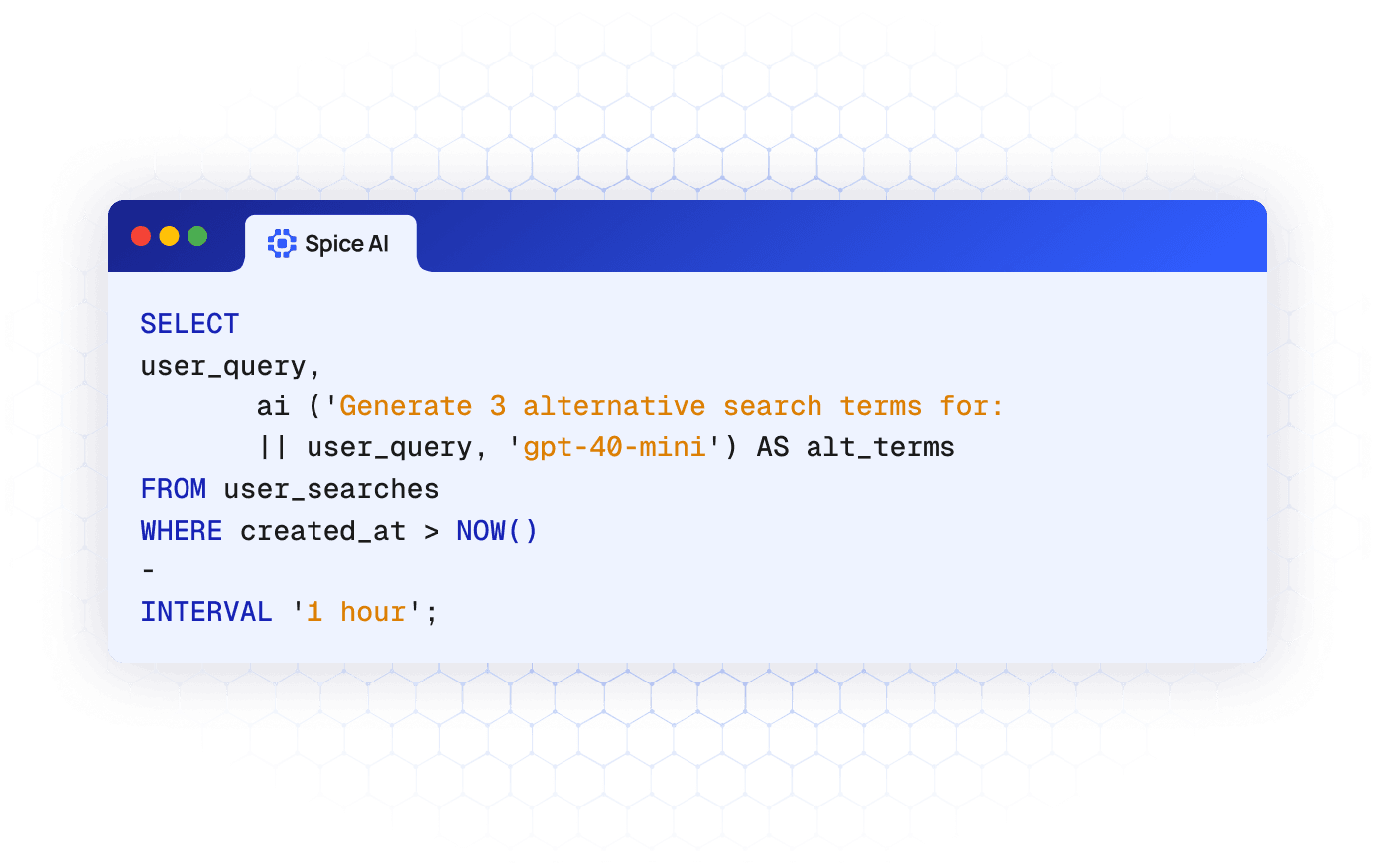

AI(), the SQL Function for LLMs

Use the AI() SQL function to query OpenAI, Anthropic, Bedrock, or any custom LLM endpoint. Spice handles tokenization, context management, and streaming responses.

Explore the AI() function

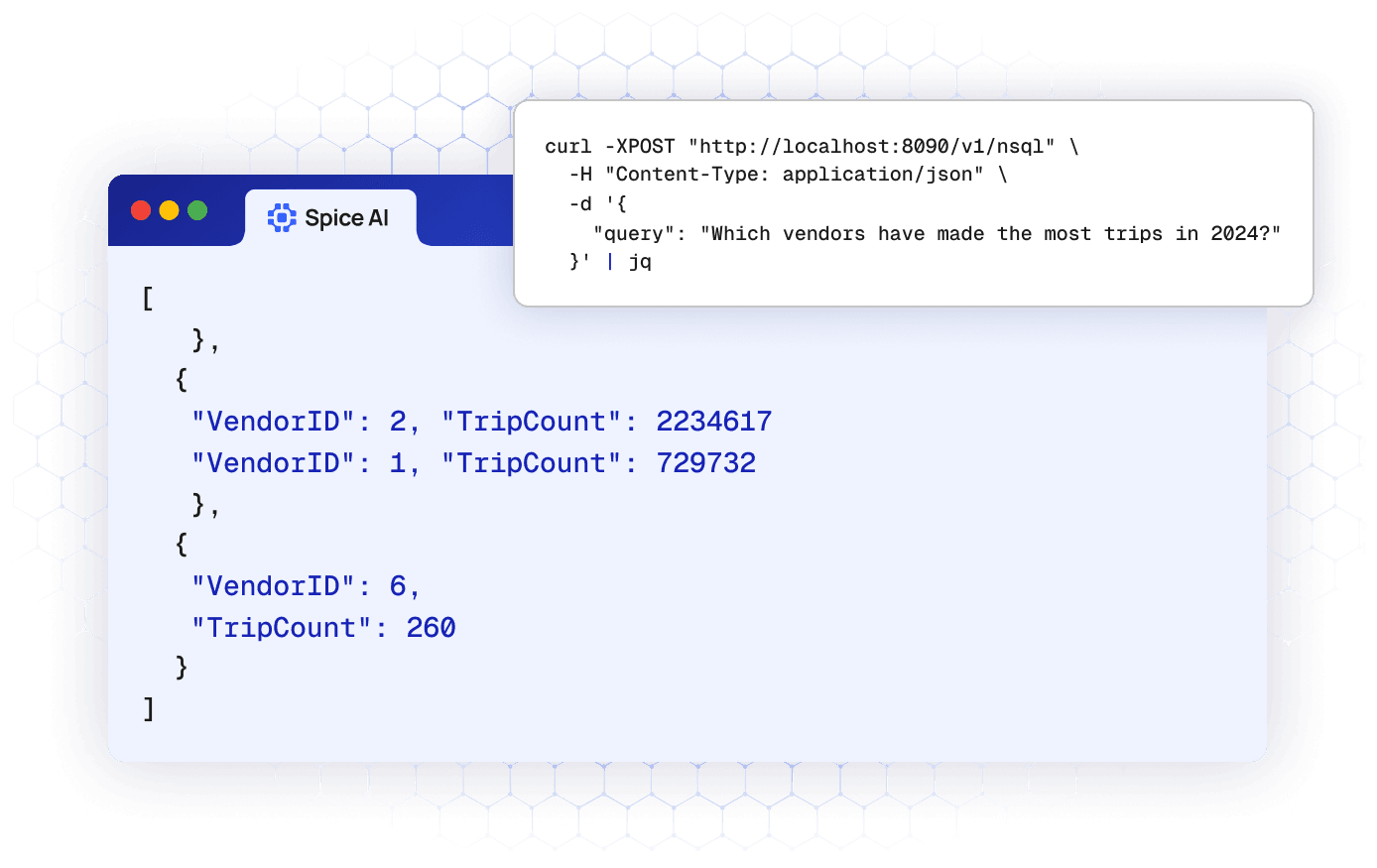

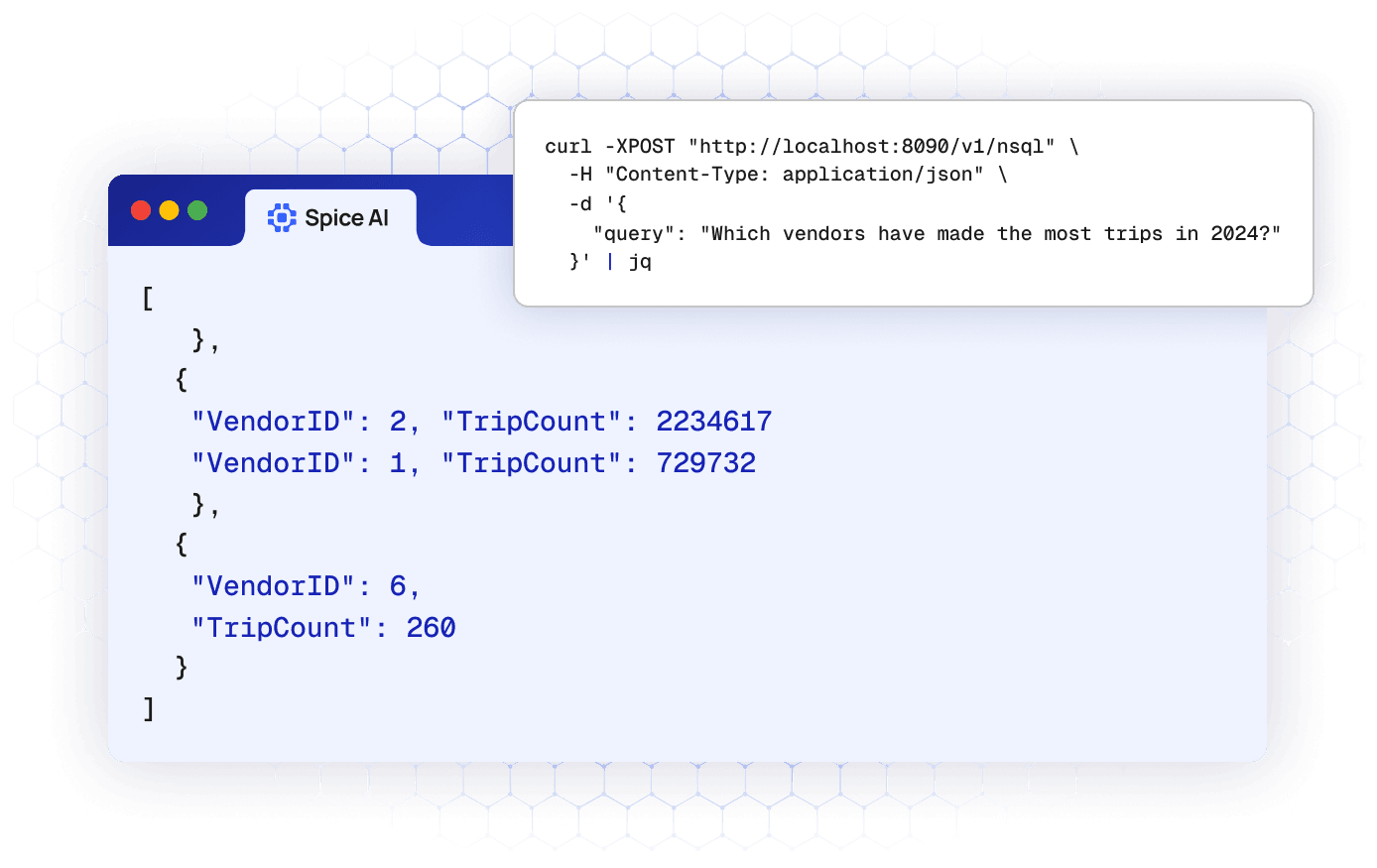

Native Text-to-SQL generation

Use natural-language prompts to generate SQL queries automatically. Spice leverages an LLM to interpret intent and produce valid, optimized SQL against your connected datasets.
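To interpret intent "against your connected datasets", a text-to-SQL system generally has to show the model which tables and columns exist. The sketch below is a hypothetical illustration using Python's built-in `sqlite3` module, not Spice's implementation: `schema_prompt` is an invented helper, and the prompt wording and example tables are assumptions.

```python
import sqlite3


def schema_prompt(conn: sqlite3.Connection, question: str) -> str:
    """Hypothetical helper: build a text-to-SQL prompt listing every table and its columns."""
    lines = ["You write SQLite queries. Use only these tables:"]
    tables = conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
    ).fetchall()
    for (table,) in tables:
        # Each PRAGMA table_info row is (cid, name, type, notnull, default, pk).
        cols = conn.execute(f'PRAGMA table_info("{table}")').fetchall()
        col_list = ", ".join(f"{c[1]} {c[2]}" for c in cols)
        lines.append(f"- {table}({col_list})")
    lines.append(f"Question: {question}")
    lines.append("Answer with a single SELECT statement.")
    return "\n".join(lines)


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, region TEXT, total REAL)")
conn.execute("CREATE TABLE customers (id INTEGER, name TEXT)")

# The resulting string would be sent to whichever LLM is configured.
prompt = schema_prompt(conn, "What is the total order value per region?")
print(prompt)
```

Listing the schema explicitly keeps the model from guessing table or column names; it does not by itself stop the model from producing unsafe SQL, which is a separate enforcement step.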

Explore AI model serving

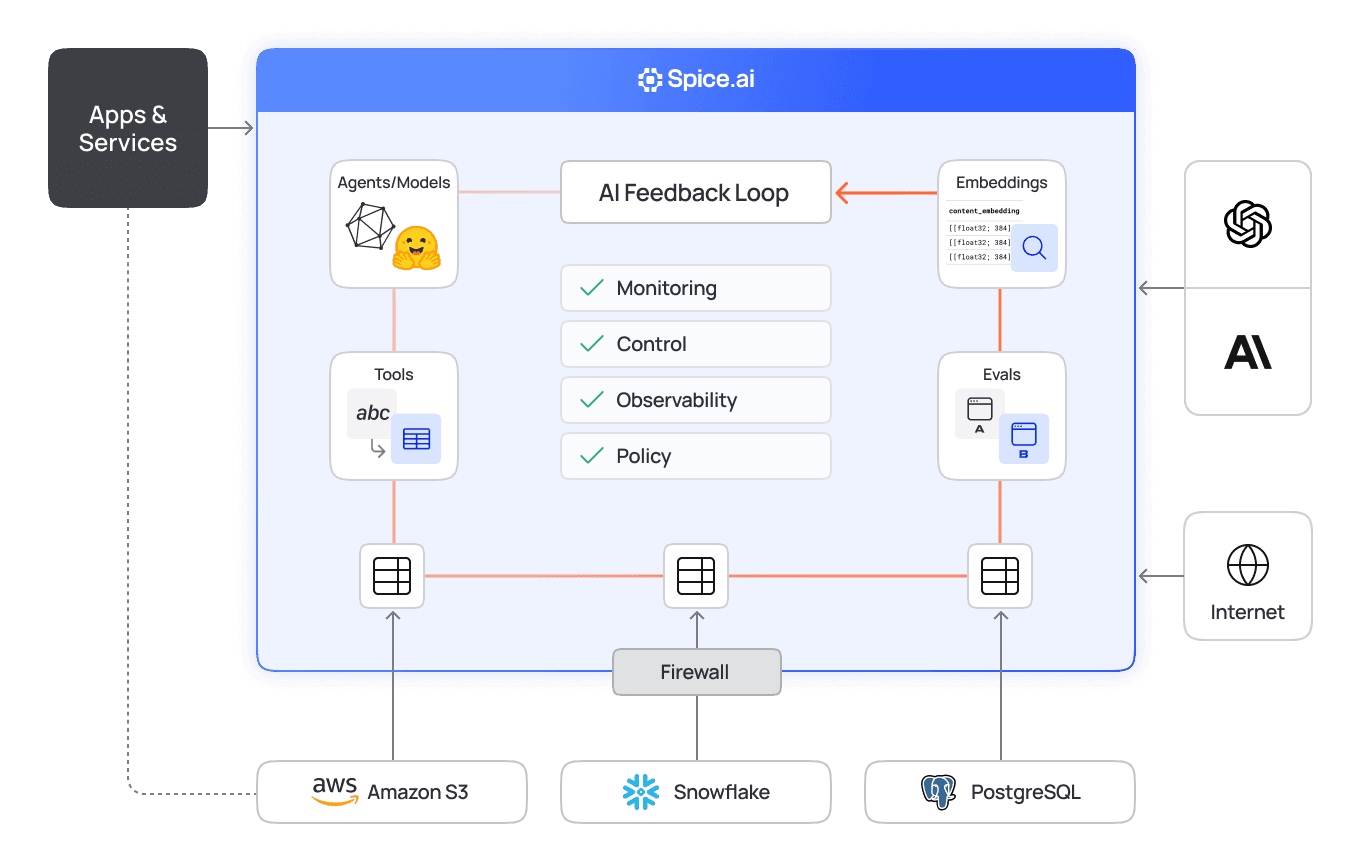

AI sandboxing for least-privilege access

Define governed AI sandboxes as intermediary access points. Spice lets teams grant LLM queries access to only specific tables, columns, or rows, without exposing production databases.
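A minimal sketch of one way to build such a sandbox, assuming SQLite stands in for the production database: only the granted columns and rows are copied into a separate database, and LLM-generated queries run against that copy. The `patients` table, its columns, and the `region = 'west'` grant are hypothetical, and Spice's own sandboxing mechanism may work differently.

```python
import sqlite3

# "Production" database holding a sensitive column (hypothetical schema and data).
prod = sqlite3.connect(":memory:")
prod.execute(
    "CREATE TABLE patients (id INTEGER, name TEXT, email TEXT, region TEXT, visits INTEGER)"
)
prod.executemany(
    "INSERT INTO patients VALUES (?, ?, ?, ?, ?)",
    [
        (1, "Ana", "ana@example.com", "west", 4),
        (2, "Ben", "ben@example.com", "east", 2),
        (3, "Cy", "cy@example.com", "west", 7),
    ],
)

# Sandbox: a separate database containing only the granted columns and rows.
sandbox = sqlite3.connect(":memory:")
sandbox.execute("CREATE TABLE patients (id INTEGER, region TEXT, visits INTEGER)")
granted = prod.execute(
    "SELECT id, region, visits FROM patients WHERE region = ?", ("west",)
).fetchall()
sandbox.executemany("INSERT INTO patients VALUES (?, ?, ?)", granted)
sandbox.commit()

# LLM-generated queries would run against `sandbox` only; `prod` is never handed out.
print(sandbox.execute("SELECT id, visits FROM patients ORDER BY id").fetchall())
try:
    sandbox.execute("SELECT email FROM patients")
except sqlite3.OperationalError as exc:
    print("blocked:", exc)
```

Copying is the simplest form of isolation to reason about; a production system would more likely keep the filtered copy refreshed or enforce the same restrictions through views and permissions.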

Trusted by developers building production AI

Teams use Spice to integrate AI directly into SQL workflows, accelerating development and eliminating redundant pipelines.

“Partnering with Spice AI has transformed how NRC Health delivers AI-driven insights. By unifying siloed data across systems, we accelerated AI feature development, reducing time-to-market from months to weeks - and sometimes days. With predictable costs and faster innovation, Spice isn't just solving some of our data and AI challenges - it's helping us redefine personalized healthcare.”

Tim Ottersburg

VP of Technology, NRC Health

“Spice AI grounds AI in our actual data, using SQL queries across many data sources. This brings accuracy to probabilistic AI systems, which are very prone to hallucinations.”

Rachel Wong

CTO, Basis Set

Integrations across all of your data sources

Built-in connectors for 30+ modern and legacy sources, from Databricks and S3 to MySQL and PostgreSQL, with provider-agnostic support for major LLM APIs.

FAQs

Answers to common questions about calling LLMs from SQL

What is the AI() function?

AI() is a built-in Spice function that lets you call large language models directly inside SQL queries. It takes a prompt (and optional data columns) as input and returns model completions as query results. This allows you to summarize, translate, generate, or classify text inline without additional code or API management.
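The "prompt plus data columns, results returned inline" pattern can be illustrated with Python's `sqlite3` module, which can register a Python function as a scalar SQL function. This is not Spice's engine or its AI() signature: `fake_llm` is a hypothetical keyword classifier standing in for a real model call so the example runs offline, and the `reviews` table is invented.

```python
import sqlite3


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a model call: a keyword-based sentiment classifier."""
    text = prompt.lower()
    negative_words = ("broken", "slow", "refund")
    return "negative" if any(word in text for word in negative_words) else "positive"


conn = sqlite3.connect(":memory:")
# Register a one-argument scalar SQL function named ai().
conn.create_function("ai", 1, fake_llm)

conn.execute("CREATE TABLE reviews (id INTEGER PRIMARY KEY, body TEXT)")
conn.executemany(
    "INSERT INTO reviews (body) VALUES (?)",
    [("Fast shipping, great product",), ("Arrived broken, support was slow",)],
)

# The prompt is built from a column value, so the function runs once per row
# and its output comes back as an ordinary result column.
rows = conn.execute(
    "SELECT id, ai('Classify the sentiment of this review: ' || body) AS sentiment "
    "FROM reviews ORDER BY id"
).fetchall()
print(rows)
```

Because the model call is just another expression, its output can be filtered, grouped, or joined like any other column.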

How does text-to-SQL work?

Spice uses your preferred LLM to convert prompts into executable SQL. Results are constrained to your connected datasets and subject to all existing SQL permissions and governance rules.
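One way to constrain generated SQL is to execute it under an authorizer that permits only reads of approved tables. The sketch below assumes SQLite and Python's `Connection.set_authorizer`; `ALLOWED_TABLES`, `run_generated_sql`, and the query strings (standing in for LLM output) are hypothetical, and Spice's governance layer is not shown.

```python
import sqlite3

ALLOWED_TABLES = {"orders"}  # hypothetical: datasets the model may query


def authorizer(action, arg1, arg2, db_name, source):
    # Permit SELECT statements, built-in function calls, and column reads on allowed tables.
    if action in (sqlite3.SQLITE_SELECT, sqlite3.SQLITE_FUNCTION):
        return sqlite3.SQLITE_OK
    if action == sqlite3.SQLITE_READ and arg1 in ALLOWED_TABLES:
        return sqlite3.SQLITE_OK
    # Deny everything else: other tables, writes, DDL, PRAGMA, transactions.
    return sqlite3.SQLITE_DENY


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, region TEXT, total REAL)")
conn.execute("CREATE TABLE payroll (employee TEXT, salary REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [(1, "west", 120.0), (2, "east", 80.0), (3, "west", 45.5)],
)
conn.commit()
conn.set_authorizer(authorizer)  # applies to every statement prepared from now on


def run_generated_sql(sql: str):
    """Execute model-generated SQL; return rows, or None if the authorizer blocked it."""
    try:
        return conn.execute(sql).fetchall()
    except sqlite3.DatabaseError:
        return None


ok = run_generated_sql(
    "SELECT region, SUM(total) FROM orders GROUP BY region ORDER BY region"
)
blocked = run_generated_sql("SELECT employee, salary FROM payroll")
print(ok)
print(blocked)
```

Checking at statement-preparation time, rather than parsing the SQL text, means the database itself decides which tables a query touches.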

Can I use different model providers?

Yes. Spice abstracts model providers behind a common interface; select OpenAI, Anthropic, Bedrock, or your custom model by name in each call. This keeps your SQL portable and future-proof.
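A minimal sketch of name-based dispatch, assuming a registry that maps model names to provider adapters sharing one function signature. `MODELS`, the model names, and the stub adapters are hypothetical; real adapters would call each provider's API, and Spice's own model configuration is not shown.

```python
import sqlite3
from typing import Callable, Dict

# Common interface assumed for this sketch: a provider maps a prompt to a completion.
Provider = Callable[[str], str]


def openai_stub(prompt: str) -> str:
    # Hypothetical stand-in; a real adapter would call the provider's API here.
    return f"openai: {prompt}"


def anthropic_stub(prompt: str) -> str:
    # Hypothetical stand-in for a second provider.
    return f"anthropic: {prompt}"


# Hypothetical model names mapped to provider adapters.
MODELS: Dict[str, Provider] = {
    "gpt": openai_stub,
    "claude": anthropic_stub,
}


def ai(prompt: str, model: str) -> str:
    """Dispatch a prompt to whichever provider is registered under `model`."""
    if model not in MODELS:
        raise ValueError(f"unknown model: {model}")
    return MODELS[model](prompt)


conn = sqlite3.connect(":memory:")
conn.create_function("ai", 2, ai)

# Switching providers changes only the model-name argument, not the query shape.
for name in ("gpt", "claude"):
    print(conn.execute("SELECT ai('Say hi', ?)", (name,)).fetchone()[0])
```

Keeping provider details behind the registry means adding a new provider touches only the mapping, while existing SQL continues to work unchanged.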

See Spice in action

Walk through your use case with an engineer and see how Spice handles federation, acceleration, and AI integration for production workloads.
